AI Core inconsistent inference results

Hello, I am developing a dog activity recognition app with CubeAI on an STM32WB55, using AI Core 9.0.0.

So far, I have been following https://wiki.st.com/stm32mcu/wiki/AI:How_to_perform_motion_sensing_on_STM32L4_IoTnode . I use the same sensor, ODR, AI input size and model structure, but my own dataset, with the model trained in Google Colab.
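Keeping the same AI input size also means the device has to cut the sensor stream into windows exactly as the training pipeline did in Colab. Below is a minimal sketch of fixed-length windowing, assuming a tri-axial accelerometer stream stored as an `(N, 3)` array. `make_windows`, `window_len` and `step` are hypothetical names, and any extra preprocessing the wiki applies is not reproduced here.

```python
import numpy as np


def make_windows(samples, window_len, step=None):
    """Split an (N, 3) accelerometer stream into (num_windows, window_len, 3) frames.

    Hypothetical helper: window_len must match the model's input size, and
    step defaults to window_len, which gives non-overlapping windows.
    """
    samples = np.asarray(samples, dtype=np.float32)
    if step is None:
        step = window_len
    # Only start positions that leave room for a full window are used
    starts = range(0, len(samples) - window_len + 1, step)
    windows = [samples[s:s + window_len] for s in starts]
    if not windows:
        # Stream shorter than one window: return an empty batch
        return np.empty((0, window_len, samples.shape[1]), dtype=np.float32)
    return np.stack(windows)
```

For example, a 100-sample stream with a hypothetical `window_len=26` gives three full windows. The trailing 22 samples are dropped, and the firmware should handle an incomplete window the same way.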

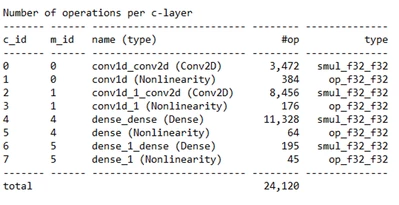

Model Structure:

Accuracy in Google Colab: 94 %.

Because inference in STM32CubeIDE almost always returned the same "wrong" result, I did the following:

- I ran validation on target in STM32CubeIDE. The accuracy is similar to Google Colab, which is good: acc=95.13%, rmse=0.145657539, mae=0.044287317, l2r=0.261498094, nse=0.905, cos=0.968.

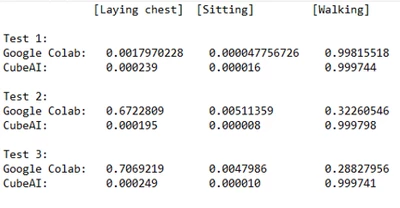

- I manually tested a couple of inputs and compared the outputs with those from Colab. This is where the problem seems to be: apart from Test1, the results differ considerably.
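The manual check can be made more systematic. The idea is to export the exact same input window from Colab as a C array and then score the on-target output against the Colab output for that window. The sketch below uses numpy only. The metric formulas are common textbook definitions and are an assumption: they may not match how X-CUBE-AI computes its validation report. `to_c_initializer` also assumes the network's input buffer expects elements in the same row-major, channel-last order as the Keras tensor. Both function names and `test_input` are hypothetical.

```python
import numpy as np


def compare_outputs(ref, target):
    """Compare a reference output vector (Colab) with the on-target output.

    Metric formulas are common definitions (an assumption); they are not
    guaranteed to match X-CUBE-AI's own validation report.
    """
    ref = np.asarray(ref, dtype=np.float64).ravel()
    target = np.asarray(target, dtype=np.float64).ravel()
    diff = ref - target
    return {
        "rmse": float(np.sqrt(np.mean(diff ** 2))),
        "mae": float(np.mean(np.abs(diff))),
        # Relative L2 error, measured against the reference output
        "l2r": float(np.linalg.norm(diff) / np.linalg.norm(ref)),
        # Cosine similarity between the two output vectors
        "cos": float(ref @ target / (np.linalg.norm(ref) * np.linalg.norm(target))),
        # For a classifier: do both sides pick the same class?
        "same_class": bool(np.argmax(ref) == np.argmax(target)),
    }


def to_c_initializer(window, name="test_input"):
    """Flatten an input window in row-major (C) order as a C float array.

    Assumes the target input buffer uses the same element order as the
    Keras tensor; `test_input` is a hypothetical variable name.
    """
    flat = np.asarray(window, dtype=np.float32).ravel(order="C")
    # repr() always yields a valid C literal such as "1.0" or "1e-05"
    values = ", ".join(repr(float(v)) + "f" for v in flat)
    return f"float {name}[{flat.size}] = {{{values}}};"
```

In Colab, `ref` would be the `model.predict` result for the same window that was pasted into the firmware, and `target` would be the output buffer printed by the board. If `same_class` fails only on the manual tests and never in validation on target, that points at how the input is built or ordered on the device, not at the converted model.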

How can this be? The model in Colab is the same as the one in STM32CubeIDE.

Side question: does AI Core transform Conv1D layers into Conv2D layers, as seen in the first picture? Could this be the reason?
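On the side question: a Conv1D over `(timesteps, channels)` is mathematically the same operation as a Conv2D with a `(K, 1)` kernel applied to the input reshaped to `(timesteps, 1, channels)`. The numpy sketch below checks this for Keras-style cross-correlation with stride 1 and `'valid'` padding. The window and kernel sizes are hypothetical. That AI Core performs exactly this mapping internally is an assumption, not something confirmed here. If it does, and it keeps the kernel orientation and data layout, the rewrite by itself would not be expected to change the outputs.

```python
import numpy as np


def conv1d_valid(x, w, b):
    """Keras-style Conv1D (cross-correlation, stride 1, 'valid' padding).

    x: (T, C_in), w: (K, C_in, C_out), b: (C_out,)
    """
    T, K = x.shape[0], w.shape[0]
    return np.stack([
        np.tensordot(x[t:t + K], w, axes=([0, 1], [0, 1])) + b
        for t in range(T - K + 1)
    ])


def conv2d_valid(x, w, b):
    """Keras-style Conv2D (cross-correlation, stride 1, 'valid' padding).

    x: (H, W, C_in), w: (KH, KW, C_in, C_out), b: (C_out,)
    """
    H, W = x.shape[:2]
    KH, KW = w.shape[:2]
    out = np.empty((H - KH + 1, W - KW + 1, w.shape[3]))
    for i in range(H - KH + 1):
        for j in range(W - KW + 1):
            patch = x[i:i + KH, j:j + KW]
            out[i, j] = np.tensordot(patch, w, axes=([0, 1, 2], [0, 1, 2])) + b
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal((26, 3))    # hypothetical window: 26 samples, 3 axes
w = rng.standard_normal((5, 3, 8))  # hypothetical kernel size 5, 8 filters
b = rng.standard_normal(8)

y1 = conv1d_valid(x, w, b)
# Same data as a 2D problem: insert a width-1 axis in input and kernel
y2 = conv2d_valid(x[:, np.newaxis, :], w[:, np.newaxis, :, :], b)
print(y1.shape, np.allclose(y1, y2[:, 0, :]))  # prints: (22, 8) True
```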

Thank you very much!