Asking for help: why does "inference on target" seem slower than expected?

I've got a simple model like this in ONNX:

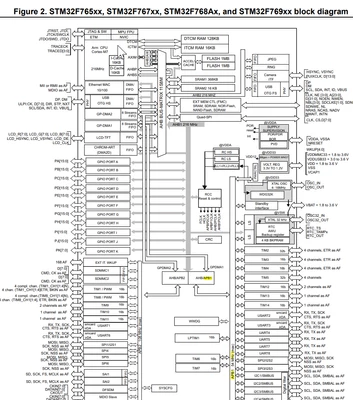

I set HCLK to 216 MHz and ran "Inference on target" on a NUCLEO-F767ZI board with X-Cube-AI 8.1.0.

I got the following information:

1) duration: 0.017 ms (17 us) per sample over 200 samples (a single-sample validation gave a similar result, 21 us)

2) cycles/MACC : 10.41

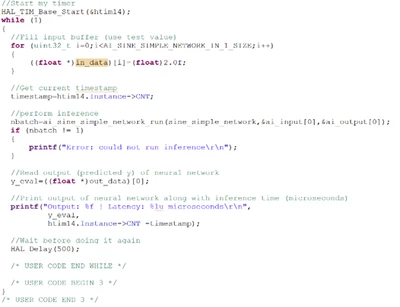

However, I then generated the code and edited it like this:

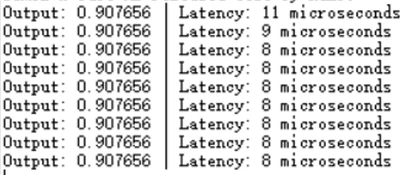

I built the project using STM32CubeIDE 1.13.2 and got this:

duration: 8us / sample (prescaler set to 216)
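To put the two measurements on the same scale, here is a minimal sketch of the arithmetic. It assumes the core really runs at 216 MHz in both cases and that the reported 17 us and 10.41 cycles/MACC come from the same run. The MACC count is derived from those two figures, not read from the report, and the helper names are hypothetical.

```c
/* Hypothetical helpers for comparing the two measurements.
 * Assumption: duration [us] * clock [MHz] = CPU cycles. */

/* Convert a duration in microseconds to CPU cycles at a clock given in MHz. */
static double us_to_cycles(double duration_us, double clock_mhz)
{
    return duration_us * clock_mhz;
}

/* Estimate the model's MACC count from a cycle count and a cycles/MACC figure. */
static double estimate_macc(double cycles, double cycles_per_macc)
{
    return cycles / cycles_per_macc;
}

/* Cycles per MACC for a run, given its cycle count and the MACC count. */
static double cycles_per_macc(double cycles, double macc)
{
    return cycles / macc;
}
```

With the figures above, 17 us is 3672 cycles and 8 us is 1728 cycles at 216 MHz. Dividing 3672 by 10.41 gives roughly 353 MACC, so the 8 us run would correspond to about 4.9 cycles/MACC. Since 0.017 ms is already a rounded value, these numbers are only approximate.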

Why is this significantly faster than the result from the default "validate on target" button?

1) Why, with the same system configuration in the .ioc file, did I get as much as 10x the latency using this method?

2) Why did I get more than 10 cycles/MACC on the NUCLEO-F767ZI at 216 MHz, when the manual gives an average of 6 cycles/MACC for a Cortex-M7 handling float32 data?

3) Why can even a single dense layer run with "validate on target" show a latency amounting to 8 us, a number large enough to match the built C project mentioned above? Is this because the validation started from the button also takes extra data read/write latency into account?