Batch Inference in X-CUBE-AI 9.0.0 (STM32CubeAI)

- December 12, 2024

I am using X-CUBE-AI 9.0.0 in my project and need to run a quantized mobilenet_v2.0.35 model (.tflite). The model takes a 4x56x56x3 input (batch x height x width x channels), but I cannot find a way to run it with that input shape: the generated code sets the input_1 size to 56*56*3 bytes only, and I can't figure out how to provide a batched input.
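As a quick check of the sizes involved: a dense NHWC uint8 tensor of 1x56x56x3 occupies 56 * 56 * 3 = 9,408 bytes, so a batch of 4 would need 37,632 bytes, stored sample after sample. The helpers below are a hypothetical sketch (the names are illustrative, not X-CUBE-AI APIs) that compute the buffer size and the byte offset of sample `b` in such a contiguous batch buffer.

```c
#include <stddef.h>

/* Hypothetical helper (not an X-CUBE-AI API): size in bytes of a dense
 * NHWC tensor whose elements are elem_size bytes each. */
static size_t nhwc_tensor_bytes(size_t batch, size_t height, size_t width,
                                size_t channels, size_t elem_size)
{
    return batch * height * width * channels * elem_size;
}

/* Byte offset of sample b inside a contiguous NHWC batch buffer:
 * samples are stored back to back, so sample b starts after b full samples. */
static size_t nhwc_sample_offset(size_t b, size_t height, size_t width,
                                 size_t channels, size_t elem_size)
{
    return b * nhwc_tensor_bytes(1, height, width, channels, elem_size);
}
```

For example, `nhwc_tensor_bytes(4, 56, 56, 3, 1)` gives the 37,632 bytes a batch-4 uint8 input would need, and `nhwc_sample_offset(3, 56, 56, 3, 1)` gives 28,224, where the fourth sample begins.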

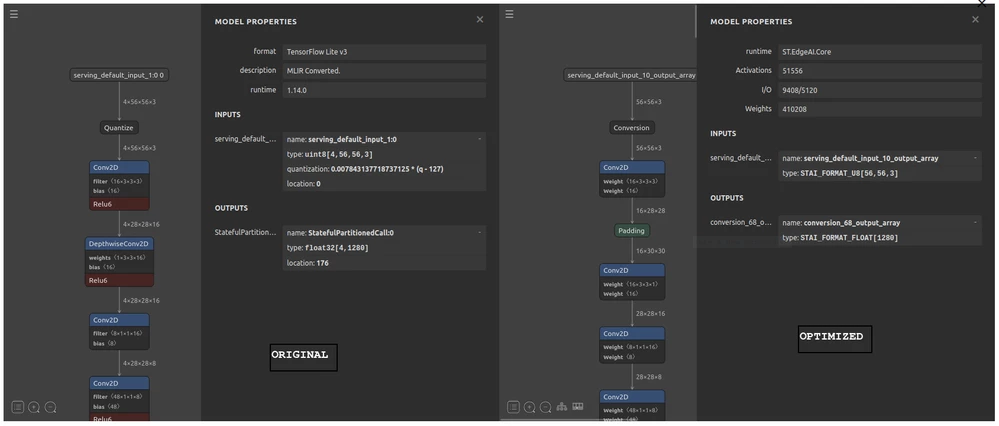

I am using the STM32CubeAI runtime. I also see differences in the input/output parameters between the original model and the one optimized by X-CUBE-AI.

I would appreciate any guidance on how to run batched inference.
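One possible workaround, assuming the generated network really only accepts batch 1, is to keep the 4 samples in one contiguous buffer and run the model once per sample. This is a hypothetical sketch: `run_one_fn` stands in for whatever wrapper calls the generated network with a batch-1 input and output on the target, and `stub_run_one` is a host-side stand-in used only to exercise the loop; neither is part of the X-CUBE-AI API.

```c
#include <stddef.h>
#include <stdint.h>

#define IN_H 56
#define IN_W 56
#define IN_C 3
#define OUT_LEN 1280                          /* f32(1x1280) output per sample */
#define SAMPLE_IN_BYTES (IN_H * IN_W * IN_C)  /* 9408 bytes of uint8 per sample */

/* Hypothetical per-sample inference callback (assumption, not an X-CUBE-AI
 * API). On the target it would wrap the generated network's run call.
 * Returns 0 on success. */
typedef int (*run_one_fn)(const uint8_t *in, float *out, void *ctx);

/* Run a batch by invoking the batch-1 model once per sample.
 * in:  batch * SAMPLE_IN_BYTES bytes, NHWC, samples back to back
 * out: batch * OUT_LEN floats, one 1280-float vector per sample
 * Returns how many samples completed before the first failure (or batch). */
static size_t run_batch_sequential(run_one_fn run_one, void *ctx,
                                   const uint8_t *in, float *out, size_t batch)
{
    size_t b;
    for (b = 0; b < batch; ++b) {
        const uint8_t *sample_in = in + b * SAMPLE_IN_BYTES;
        float *sample_out = out + b * OUT_LEN;
        if (run_one(sample_in, sample_out, ctx) != 0) {
            break;  /* stop at the first failing sample */
        }
    }
    return b;
}

/* Host-side stand-in for run_one, used only to exercise the loop:
 * writes the sample's first input byte into every output element. */
static int stub_run_one(const uint8_t *in, float *out, void *ctx)
{
    size_t i;
    (void)ctx;
    for (i = 0; i < OUT_LEN; ++i) {
        out[i] = (float)in[0];
    }
    return 0;
}
```

Keeping the buffer in the same NHWC [b:4,h:56,w:56,c:3] layout as the model input means the same data could later be passed unchanged to a runtime that accepts the full batch.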

I am attaching the Netron screenshot of the comparison below:

Comparison between original (left) and optimized model

EDIT:

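In the generated report file, the layer breakdown table shows batch size 4 ([b:4,h:56,w:56,c:3]), but the input and output entries do not: they are reported with batch 1 (uint8 1x56x56x3 at 9.19 KBytes, and f32 1x1280 at 5.00 KBytes).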

    -----------------------------------------------------------------------------------------------------------------
    input 1/1  : 'serving_default_input_10', uint8(1x56x56x3), 9.19 KBytes, QLinear(0.007843138,127,uint8), user
    output 1/1 : 'conversion_68', f32(1x1280), 5.00 KBytes, user
    ------ -------------------------------------------- ----------------------
    m_id   layer (type,original)                        oshape
    ------ -------------------------------------------- ----------------------
    0      serving_default_input_10 (Input, )           [b:4,h:56,w:56,c:3]
           conversion_0 (Conversion, QUANTIZE)          [b:4,h:56,w:56,c:3]
    ------ -------------------------------------------- ----------------------
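The `QLinear(0.007843138,127,uint8)` entry describes the input quantization. Assuming the usual affine convention real = scale * (q - zero_point), with scale = 0.007843138 (about 2/255) and zero_point = 127, uint8 values 0..255 map to roughly -0.996..1.004. A minimal sketch of the conversion in both directions (the function names are illustrative, not X-CUBE-AI APIs):

```c
#include <stdint.h>

/* Input quantization parameters as printed in the report:
 * QLinear(0.007843138, 127, uint8). */
#define IN_SCALE      0.007843138f
#define IN_ZERO_POINT 127

/* Float -> uint8: q = round(x / scale + zero_point), saturated to [0, 255]. */
static uint8_t quantize_u8(float x)
{
    float v = x / IN_SCALE + (float)IN_ZERO_POINT;
    if (v < 0.0f)   v = 0.0f;     /* saturate below */
    if (v > 255.0f) v = 255.0f;   /* saturate above */
    return (uint8_t)(v + 0.5f);   /* round half up; v is non-negative here */
}

/* uint8 -> float: real = scale * (q - zero_point). */
static float dequantize_u8(uint8_t q)
{
    return IN_SCALE * (float)((int)q - IN_ZERO_POINT);
}
```

For instance, 0.0f quantizes to the zero point 127 and 0.5f to 191, while out-of-range inputs saturate to 0 or 255.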

I have also attached the generated report text file.