Solved


Hello

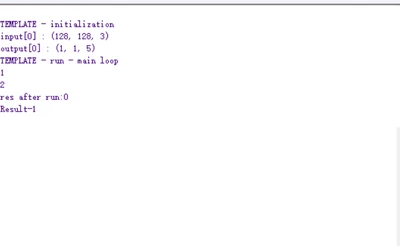

This is due to the quantization of the model, as explained in UM2526, section 1.3:

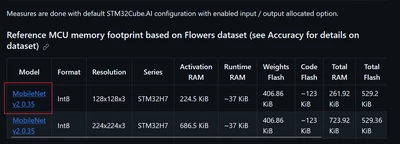

- The code generator quantizes weights and bias, and associated activations from floating point to 8-bit precision.

These are mapped on the optimized and specialized C implementation for the supported kernels.

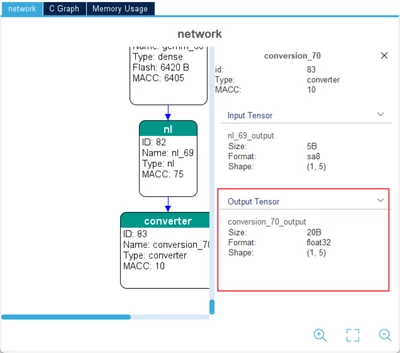

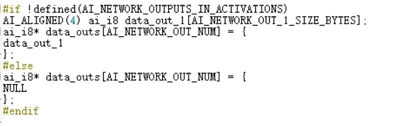

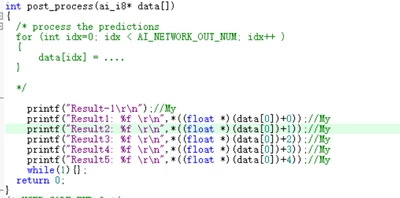

Otherwise, the floating-point version of the operator is used and float-to-8-bit and 8-bit-to-float convert operators are automatically inserted.

- The objective of this technique is to reduce the model size while also improving the CPU and hardware accelerator latency (including power consumption aspects) with little degradation in model accuracy.

So, to improve CPU and hardware accelerator latency, this conversion from float to ai_i8 is needed.
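To illustrate what a float-to-8-bit convert operator does, here is a minimal sketch in C. It assumes the common affine scheme (a `scale` and an integer `zero_point` per tensor); the exact scheme and values used by the generated code are not shown in this thread. The function names are hypothetical and not part of the X-CUBE-AI API, and `int8_t` stands in for `ai_i8` on the assumption that it is a signed 8-bit type.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper, not an X-CUBE-AI function.
 * Maps a float to a signed 8-bit value: q = round(x / scale + zero_point),
 * saturated to the int8 range [-128, 127]. */
static int8_t quantize_f32_to_i8(float x, float scale, int zero_point)
{
    assert(scale > 0.0f);                 /* scale must be strictly positive */

    float v = x / scale + (float)zero_point;

    /* Saturate before rounding so the cast below cannot overflow. */
    if (v < -128.0f) v = -128.0f;
    if (v > 127.0f)  v = 127.0f;

    /* Round to nearest, ties away from zero; the cast truncates. */
    return (int8_t)(v >= 0.0f ? v + 0.5f : v - 0.5f);
}

/* Hypothetical inverse: x is approximately scale * (q - zero_point). */
static float dequantize_i8_to_f32(int8_t q, float scale, int zero_point)
{
    return scale * (float)((int)q - zero_point);
}
```

With `scale = 0.25f` and `zero_point = 0`, the input `0.5f` maps to `2` and back to `0.5f`, while `100.0f` saturates at `127`. Values that are not multiples of `scale` come back slightly changed, which is the small accuracy loss the manual mentions.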

Hope this answers your question.

BR
