February 26, 2024

Solved

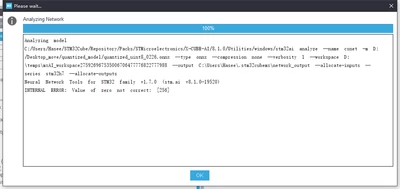

INTERNAL ERROR: Value of zero not correct

Hello, everyone! I'm learning to deploy my object detection model using cubeai. The model is trained with PyTorch, and since cubeai doesn't support converting .pth files, I'm converting it to ONNX format. The model was too large for my development board, so I quantized it. Because cubeai officially recommends quantizing in ONNX format, that is what I did. However, when I analyze the quantized model with cubeai, the following error occurs (the model before quantization analyzes normally). Can anyone help me figure out what's going on?
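For readers less familiar with the quantization step mentioned above, here is a minimal sketch of what 8-bit affine quantization does to a tensor: floats are mapped to integers through a scale and a zero point. This is a generic illustration only. It is not the code of cubeai or of any ONNX quantization tool, and the function names `affine_qparams`, `quantize`, and `dequantize` are hypothetical. It is also not a diagnosis of the error in this thread.

```python
from typing import List, Tuple


def affine_qparams(values: List[float], qmin: int = -128, qmax: int = 127) -> Tuple[float, int]:
    """Derive an illustrative (scale, zero_point) pair from observed floats."""
    # Extend the range to include 0.0 so that float zero maps to an exact integer.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    if hi == lo:
        return 1.0, 0  # degenerate case: every value is zero
    scale = (hi - lo) / (qmax - qmin)
    zero_point = int(round(qmin - lo / scale))
    # Keep the zero point inside the integer range.
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values: List[float], scale: float, zero_point: int,
             qmin: int = -128, qmax: int = 127) -> List[int]:
    """Map floats to integers, clamping to [qmin, qmax]."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map quantized integers back to approximate floats."""
    return [(x - zero_point) * scale for x in q]


if __name__ == "__main__":
    data = [-1.0, 0.0, 0.5, 2.0]
    scale, zp = affine_qparams(data)
    q = quantize(data, scale, zp)
    print(scale, zp, q, dequantize(q, scale, zp))
```

The point of the sketch is that float `0.0` must land exactly on the integer zero point, so every quantized tensor carries a scale and a zero point alongside its integer data.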