Issues encountered when using STM32Cube.AI

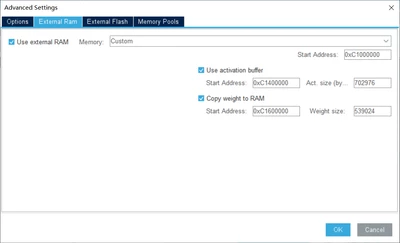

I want to run an AI machine vision application using STM32Cube.AI. After training the model with TensorFlow, I obtained a .tflite file. I then imported and analyzed the model with the CubeAI (X-CUBE-AI) pack in STM32CubeMX and generated the CubeAI C code. The first strange behavior appears here: although I configured CubeAI in CubeMX to use external RAM and external flash and specified their addresses, Keil still reports insufficient RAM when building the project. This suggests that the external RAM setting I configured in CubeAI did not take effect.

Even with external RAM enabled as described above, building the generated code still fails with an insufficient-RAM error.

So I had to use '__attribute__((at(...)))' to place some of the buffers used by CubeAI in external RAM by hand, which is inefficient and error-prone.
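Because each buffer is placed by hand, nothing prevents two of them from overlapping or running past the end of the SDRAM. A minimal sketch of a compile-time guard follows, assuming the two addresses used in this post (0xC1200000 and 0xC1A00000). The sizes `ACTIVATIONS_SIZE` and `INPUT_SIZE_BYTES` and the SDRAM bounds `SDRAM_BASE`/`SDRAM_SIZE` are hypothetical placeholders; in the real project they would come from `AI_NETWORK_DATA_ACTIVATIONS_SIZE`, `AI_NETWORK_IN_1_SIZE_BYTES` and the board's memory map.

```c
#include <assert.h>   /* static_assert (C11) */
#include <stdint.h>

/* Hypothetical memory map: 32 MiB SDRAM on FMC bank 1. Adjust to the board. */
#define SDRAM_BASE        0xC0000000u
#define SDRAM_SIZE        0x02000000u

/* Addresses used with __attribute__((at(...))) in this post. */
#define ACTIVATIONS_ADDR  0xC1200000u
#define INPUT_ADDR        0xC1A00000u

/* Hypothetical sizes; in the project use AI_NETWORK_DATA_ACTIVATIONS_SIZE
 * and AI_NETWORK_IN_1_SIZE_BYTES from the generated headers. */
#define ACTIVATIONS_SIZE  (3u * 1024u * 1024u)
#define INPUT_SIZE_BYTES  (224u * 224u * 3u * 4u)

/* Fail the build if the activations spill into the input buffer. */
static_assert(ACTIVATIONS_ADDR + ACTIVATIONS_SIZE <= INPUT_ADDR,
              "activations buffer overlaps in_data");

/* Fail the build if the input buffer runs past the end of SDRAM. */
static_assert(INPUT_ADDR + INPUT_SIZE_BYTES <= SDRAM_BASE + SDRAM_SIZE,
              "in_data extends beyond SDRAM");

/* AI_ALIGNED(32) in the post expects 32-byte aligned buffers. */
static_assert(ACTIVATIONS_ADDR % 32u == 0 && INPUT_ADDR % 32u == 0,
              "buffers must be 32-byte aligned");

/* Bytes left between the end of the activations and the start of in_data. */
static uint32_t activations_headroom(void)
{
    return INPUT_ADDR - (ACTIVATIONS_ADDR + ACTIVATIONS_SIZE);
}
```

With these placeholder sizes the headroom is 0x500000 bytes (5 MiB); if the generated activations size grows past 8 MiB, the first assertion stops the build instead of letting the buffers silently overlap at runtime.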

With that change the program compiled successfully. However, at runtime it triggers a hard fault on the line 'return ai_platform_network_process(network, input, output);', and I cannot debug any further from there. The call stack shows that the fault occurs inside 'st_sssa8_ch_convolve_rank1upd'. How should I troubleshoot this problem? A common suggestion is to check whether CRC is enabled. It is: STM32CubeMX now enables CRC automatically when CubeAI is used.
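One way to narrow down a hard fault like this is to decode the Configurable Fault Status Register (CFSR). Even when a bus or memory fault escalates to HardFault, CFSR still records the original cause, and when `BFARVALID` or `MMARVALID` is set, `SCB->BFAR` or `SCB->MMFAR` holds the faulting address; an address inside the external SDRAM or external flash range would point at the external memory setup rather than at the network code itself. The sketch below assumes an ARMv7-M core (Cortex-M3/M4/M7; the post does not name the MCU) and only decodes a value; on the target it would be fed `SCB->CFSR` read inside `HardFault_Handler`. The function name `cfsr_describe` is hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

typedef struct {
    uint32_t mask;
    const char *name;
} cfsr_flag;

/* CFSR bit layout for ARMv7-M (MMFSR bits 0-7, BFSR 8-15, UFSR 16-31). */
static const cfsr_flag CFSR_FLAGS[] = {
    { 1u << 0,  "IACCVIOL" },    /* instruction access violation */
    { 1u << 1,  "DACCVIOL" },    /* data access violation */
    { 1u << 3,  "MUNSTKERR" },
    { 1u << 4,  "MSTKERR" },
    { 1u << 5,  "MLSPERR" },
    { 1u << 7,  "MMARVALID" },   /* MMFAR holds the faulting address */
    { 1u << 8,  "IBUSERR" },
    { 1u << 9,  "PRECISERR" },   /* precise data bus error */
    { 1u << 10, "IMPRECISERR" }, /* imprecise data bus error */
    { 1u << 11, "UNSTKERR" },
    { 1u << 12, "STKERR" },
    { 1u << 13, "LSPERR" },
    { 1u << 15, "BFARVALID" },   /* BFAR holds the faulting address */
    { 1u << 16, "UNDEFINSTR" },
    { 1u << 17, "INVSTATE" },
    { 1u << 18, "INVPC" },
    { 1u << 19, "NOCP" },
    { 1u << 24, "UNALIGNED" },
    { 1u << 25, "DIVBYZERO" },
};

/* Write the names of all set CFSR bits, space-separated, into buf
 * (silently truncated if buf is too small). */
static void cfsr_describe(uint32_t cfsr, char *buf, size_t len)
{
    assert(buf != NULL || len == 0);
    if (len == 0) {
        return;
    }
    buf[0] = '\0';
    for (size_t i = 0; i < sizeof CFSR_FLAGS / sizeof CFSR_FLAGS[0]; i++) {
        if ((cfsr & CFSR_FLAGS[i].mask) == 0) {
            continue;
        }
        if (buf[0] != '\0') {
            strncat(buf, " ", len - strlen(buf) - 1);
        }
        strncat(buf, CFSR_FLAGS[i].name, len - strlen(buf) - 1);
    }
}
```

For example, a CFSR value of 0x00008200 decodes to "PRECISERR BFARVALID", meaning a precise data bus error whose address is available in `SCB->BFAR`.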

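```c
#include <string.h>        /* memcpy */
#include "main.h"          /* HAL definitions, __HAL_RCC_CRC_CLK_ENABLE() */
#include "network.h"       /* X-CUBE-AI generated model API (model named "network") */
#include "network_data.h"  /* AI_NETWORK_DATA_ACTIVATIONS_SIZE */

/* Global handle to reference the instantiated C model */
static ai_handle network = AI_HANDLE_NULL;

/* Global C array for the activations buffer, placed in external SDRAM.
 * __attribute__((at(...))) is Arm Compiler 5 syntax; Arm Compiler 6 uses
 * __attribute__((section(".ARM.__at_0xC1200000"))) instead.
 * The buffer must not reach in_data: 0xC1A00000 - 0xC1200000 = 8 MiB. */
AI_ALIGNED(32)
static ai_u8 activations[AI_NETWORK_DATA_ACTIVATIONS_SIZE] __attribute__((at(0xC1200000)));

/* Array to store the data of the input tensor (external SDRAM).
 * The element type must match the model input format;
 * alternative: static ai_u8 in_data[AI_NETWORK_IN_1_SIZE_BYTES]; */
AI_ALIGNED(32)
static ai_float in_data[AI_NETWORK_IN_1_SIZE] __attribute__((at(0xC1A00000)));

/* C array to store the data of the output tensor
 * (alternative: static ai_u8 out_data[AI_NETWORK_OUT_1_SIZE_BYTES];) */
AI_ALIGNED(32)
static ai_float out_data[AI_NETWORK_OUT_1_SIZE];

/* Pointers to the model's input/output tensor descriptors */
static ai_buffer *ai_input;
static ai_buffer *ai_output;

int aiInit(void)
{
    ai_error err;

    /* Create and initialize the C model (NULL: use the generated weights) */
    const ai_handle acts[] = { activations };
    err = ai_network_create_and_init(&network, acts, NULL);
    if (err.type != AI_ERROR_NONE) {
        return -1;   /* never run inference on an uninitialized network */
    }

    /* Retrieve pointers to the model's input/output tensors */
    ai_input = ai_network_inputs_get(network, NULL);
    ai_output = ai_network_outputs_get(network, NULL);

    return 0;
}

int aiRun(const void *in, void *out)
{
    ai_i32 n_batch;

    /* 1 - Update IO handlers with the data payload */
    ai_input[0].data = AI_HANDLE_PTR(in);
    ai_output[0].data = AI_HANDLE_PTR(out);

    /* 2 - Perform the inference */
    n_batch = ai_network_run(network, &ai_input[0], &ai_output[0]);
    if (n_batch != 1) {
        ai_error err = ai_network_get_error(network);
        (void)err;   /* inspect err.type and err.code in the debugger */
        return -1;
    }

    return 0;
}

void main_loop(void)
{
    /* The STM32 CRC IP clock must be enabled to use the network runtime library */
    __HAL_RCC_CRC_CLK_ENABLE();

    if (aiInit() != 0) {
        return;   /* initialization failed: do not call aiRun() */
    }

    while (1) {
        /* 1 - Acquire, pre-process and fill the input buffer.
         * in_buf, OUT_HEIGHT and OUT_WIDTH are defined elsewhere in the application.
         * The byte count must equal AI_NETWORK_IN_1_SIZE_BYTES, and the data must
         * already be in the model's input format: memcpy does not convert
         * 8-bit pixels into floats. */
        //acquire_and_process_data(in_data);
        memcpy(in_data, in_buf, OUT_HEIGHT * OUT_WIDTH * 3);

        /* 2 - Call the inference engine */
        aiRun(in_data, out_data);

        /* 3 - Post-process the predictions */
        //post_process(out_data);
    }
}
```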
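If the model's input tensor really is float32, as the `ai_float in_data[...]` declaration implies, copying OUT_HEIGHT*OUT_WIDTH*3 raw bytes with memcpy fills only part of the array with pixel bytes reinterpreted as float bit patterns. A minimal sketch of an element-wise conversion is shown below, assuming interleaved 8-bit RGB pixels and a model trained on inputs scaled to [0, 1]; the post does not state the normalization used in TensorFlow, so the 1/255 factor is an assumption, and `fill_float_input` is a hypothetical helper. If the model instead takes int8 input, the pixels would have to be quantized with the input tensor's scale and zero point rather than converted to float.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Convert n 8-bit pixel components to float32 in [0, 1].
 * The 1/255 scaling is an assumption; use the normalization applied in training. */
static void fill_float_input(float *dst, const uint8_t *src, size_t n)
{
    assert(n == 0 || (dst != NULL && src != NULL));
    for (size_t i = 0; i < n; i++) {
        dst[i] = (float)src[i] / 255.0f;
    }
}
```

In the loop above, this would replace the memcpy call, e.g. `fill_float_input(in_data, in_buf, OUT_HEIGHT * OUT_WIDTH * 3);`, with the element count equal to `AI_NETWORK_IN_1_SIZE`.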