Issues Integrating CRNN (Conv + GRU) Model in X-Cube-AI

- November 4, 2024

Hi community members,

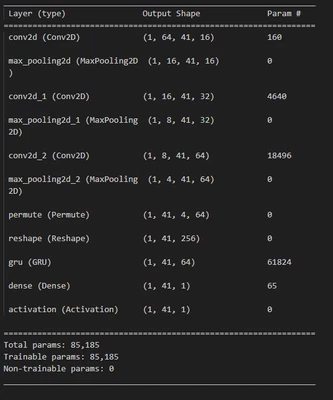

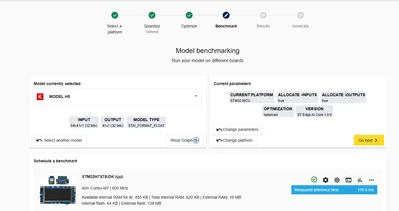

I’m currently working on a project where I want to deploy a Convolutional Recurrent Neural Network (CRNN) on an STM32 MCU using X-Cube-AI. The network structure includes convolutional layers followed by GRU layers, with a reshape and permute in between to convert the 4D tensor output to a 3D input tensor for the recurrent layer. Initially, I implemented this in PyTorch (exported to ONNX), and then tried TensorFlow Keras (exported to TFLite). Unfortunately, I’ve run into errors with both approaches during the import process in X-Cube-AI.

Error Messages

When importing the ONNX model from PyTorch, I received the following error message:

TOOL ERROR: Shape and shape map lengths must be the same: [192] vs. (CH_IN, CH)

I built the same architecture using TensorFlow Keras to see if this would work, then exported it as a TFLite model. However, importing this TFLite model into X-Cube-AI produced the following error:

INTERNAL ERROR: Inconsistent in/out tensor shapes in transpose_6_output: (H: 41, W: 4, CH: 64) and (H: 1, CH: 256)

Both errors seem to be related to the incorrect reshaping of dimensions. The GRU in the ONNX model has an input size of 256, hidden_size of 64, and is bidirectional. So the 192 comes from 3*hidden_size, which might give a clue on where and why this problem occurs. In the TFLite model, the dimensions seem to be mixed up. The GRU layer isn't supported in TFLite, so I had to unroll the GRU. I also have stateful=False, return_sequences=True, FYI.
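To make the 192 concrete, here is a small sketch of the per-direction GRU parameter shapes, assuming the layout PyTorch documents for nn.GRU (the three gates stacked along the first axis). The helper name gru_param_shapes is made up for illustration, and this does not show which tensor X-Cube-AI is actually complaining about; it only shows that the 1-D biases are the parameters with shape [192].

```python
def gru_param_shapes(input_size, hidden_size):
    """Per-direction GRU parameter shapes, assuming PyTorch's nn.GRU layout."""
    gates = 3  # reset, update and new gate are stacked along axis 0
    rows = gates * hidden_size
    return {
        "weight_ih": (rows, input_size),
        "weight_hh": (rows, hidden_size),
        "bias_ih": (rows,),
        "bias_hh": (rows,),
    }


# Values from my model: input size 256, hidden_size 64.
shapes = gru_param_shapes(input_size=256, hidden_size=64)
for name, shape in shapes.items():
    print(name, shape)
```

With bidirectional=True, PyTorch keeps a second set of parameters with the same shapes for the reverse direction.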

Since both errors seem related to the reshaping, I think it is worth explaining this part in more detail.

Reshaping explained in detail

Both models take a spectrogram as input. In PyTorch the input shape is (1, 1, 64, 41), with dimensions (Batch, Channels, Height, Width); in TensorFlow it is (1, 64, 41, 1), following its channels-last convention (Batch, Height, Width, Channels). After the convolutional part, there are 64 channels, the height is reduced from 64 to 4, and the width stays at 41. The GRU expects a tensor of shape (batch, timesteps, features), so I permute the dimensions to the order (batch, timesteps, height, channels), i.e. (1, 41, 4, 64), and then merge the height with the channels to obtain a tensor of shape (1, 41, 256) as the input to the GRU layer.
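For anyone who wants to check the shapes, the permute and reshape can be reproduced on a dummy tensor. This is only a NumPy sketch of the shape logic, not the actual model code: the variable names are invented here, and the random array stands in for the real convolution output.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for the conv output in PyTorch layout: (batch, channels, height, width).
conv_nchw = rng.standard_normal((1, 64, 4, 41))
# The same data in TensorFlow layout: (batch, height, width, channels).
conv_nhwc = conv_nchw.transpose(0, 2, 3, 1)

# PyTorch side: (B, C, H, W) -> (B, W, H, C), then merge H and C into features.
seq_from_nchw = conv_nchw.transpose(0, 3, 2, 1).reshape(1, 41, 4 * 64)

# TensorFlow side: (B, H, W, C) -> (B, W, H, C), then merge H and C into features.
seq_from_nhwc = conv_nhwc.transpose(0, 2, 1, 3).reshape(1, 41, 4 * 64)

print(seq_from_nchw.shape)  # (1, 41, 256)
print(seq_from_nhwc.shape)  # (1, 41, 256)
print(np.array_equal(seq_from_nchw, seq_from_nhwc))  # True
```

The intermediate (41, 4, 64) tensor after the permute matches the (H: 41, W: 4, CH: 64) shape reported for transpose_6_output in the TFLite error, so the failure appears to be at the step that collapses it to 256 features.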

I've attached the summaries of both models, as well as the model files for reproducibility!

I really appreciate any help or suggestions from the community, especially if you’ve faced similar issues or have experience with deploying CRNN models on STM32.

Thank you!

Have a wonderful day if you're reading this!