Solved

MatMul operation is mapped to the MCU and converted to a Convolution

- December 12, 2025

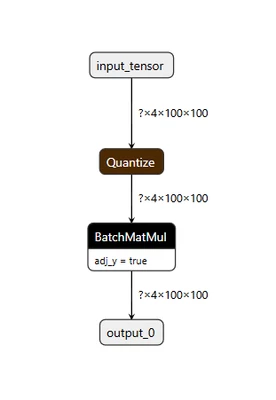

Hello. I am trying to run a MatMul operation on the NPU. I created a simple TFLite model containing a MatMul operation, as shown below.

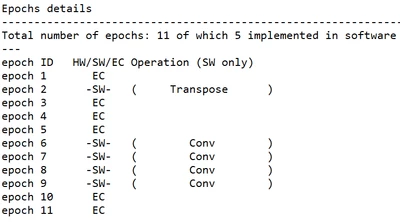

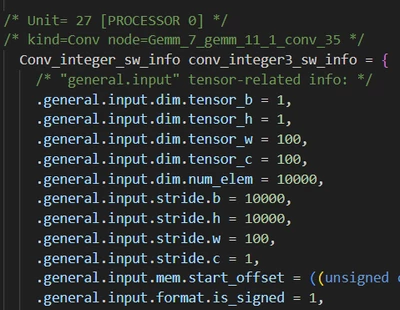

When I convert the model with ST Edge AI, the MatMul operator is mapped to the MCU instead of the NPU, and it is converted into a Convolution. In addition, the network.c file shows that it becomes a convolution layer with an extremely large stride.
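
For context on why a converter might express a MatMul as a Convolution at all: when one operand is treated as weights, a matrix product is mathematically the same as a 1x1 convolution. The sketch below is only a plain-Python illustration of that general equivalence, with made-up data and hypothetical helper names (`matmul`, `conv1x1`). It is not taken from ST Edge AI, does not describe its actual lowering, and does not account for the large stride seen in network.c.

```python
def matmul(a, b):
    """Plain matrix product of a (M x K) and b (K x N), given as nested lists."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for i in range(rows)]


def conv1x1(feature_map, kernels):
    """1x1 convolution with stride 1 and no padding.

    feature_map: [height][width][in_channels]
    kernels:     [out_channels][in_channels], one 1x1 filter per output channel
    """
    return [[[sum(pixel[c] * kernel[c] for c in range(len(kernel)))
              for kernel in kernels]
             for pixel in row]
            for row in feature_map]


# Hypothetical example data: A is 2x3, B is 3x2.
A = [[1, 2, 3], [4, 5, 6]]
B = [[7, 8], [9, 10], [11, 12]]

# Treat A as a feature map of height 1, width M, with K input channels,
# and each column of B as one 1x1 filter.
feature_map = [A]
kernels = [list(col) for col in zip(*B)]

conv_out = conv1x1(feature_map, kernels)[0]
assert conv_out == matmul(A, B)
```

Each row of `A` plays the role of one pixel with K channels, and each column of `B` plays the role of one output filter, so the two computations produce the same M x N result.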

However, https://stm32ai-cs.st.com/assets/embedded-docs/stneuralart_operator_support.html states that the MatMul (batch MatMul) operator is supported on the ST Neural-ART Accelerator.

How can I resolve this issue? Thank you.