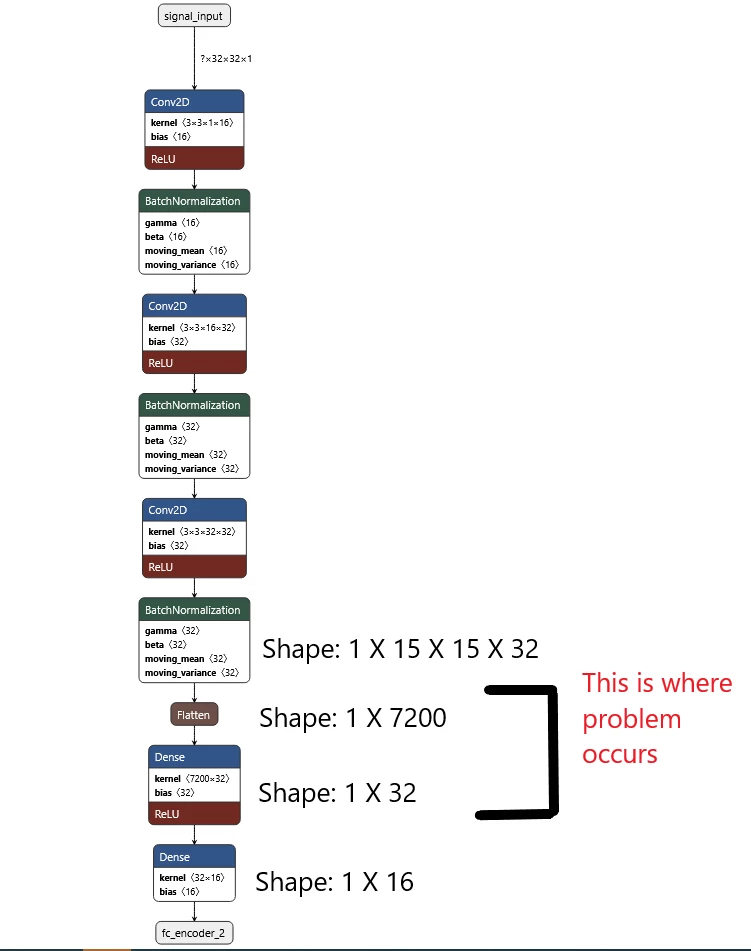

TOOL ERROR: operands could not be broadcast together with shapes (32,7200) (32,)

I have a convolutional model with convolutional layers, batch normalization layers, and Dense layers at the end. The model is converted to a TFLite model. Inference works perfectly on my computer using TFLite, but when I try to deploy it on the Nucleo H743ZI2 I get this error.

The network layers and their shapes are shown in the picture. Has anyone come across this problem?

As far as I understand, I did not make a mistake when creating the model. It looks like the STM Cube library is misinterpreting it.
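
For reference, the wording of the message matches a NumPy-style broadcasting failure, where shapes are aligned from the right. Below is a minimal sketch in plain Python of that rule. The helper name `broadcast_shape` is my own and not part of any STM or TensorFlow API. It shows why (32, 7200) and (32,) are incompatible while (32, 7200) and (32, 1) are not. My guess, and it is only a guess, is that a per-channel parameter of length 32 is being applied along the wrong axis.

```python
def broadcast_shape(a, b):
    """Return the broadcast shape of a and b, or raise ValueError.

    Hypothetical helper that mimics NumPy-style broadcasting rules.
    """
    result = []
    # Walk the dimensions from the right, padding the shorter shape with 1s.
    for i in range(1, max(len(a), len(b)) + 1):
        da = a[-i] if i <= len(a) else 1
        db = b[-i] if i <= len(b) else 1
        # Two dimensions are compatible if they are equal or one of them is 1.
        if da != db and da != 1 and db != 1:
            raise ValueError(f"cannot broadcast shapes {a} and {b}")
        result.append(db if da == 1 else da)
    return tuple(reversed(result))


# A column of 32 values lines up with the 32 rows.
print(broadcast_shape((32, 7200), (32, 1)))

# A flat vector of 32 values is compared against the last axis (7200) and fails.
try:
    broadcast_shape((32, 7200), (32,))
except ValueError as err:
    print(err)
```

If that guess is right, the shapes from the error would only line up if the length-32 operand were treated as (32, 1), or if the other operand had 32 as its last axis.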

Additional Info: I am using STM Cube AI version 7.1.0

Thanks in advance

Rick