RTC Temperature Calibration -- 20ppm all temperatures feasible?

Good morning,

I'm developing a device with these requirements:

- STM32F446RET6

- No internet (NTP) or GPS connection

- Real-Time clock, set manually (so, the less often a user has to set it, the better)

- Outdoor -20°C~70°C temperature range (most installations in continental USA)

Ideally, we would like to get our total clock drift under 10 minutes/year (roughly 20 ppm) if possible.

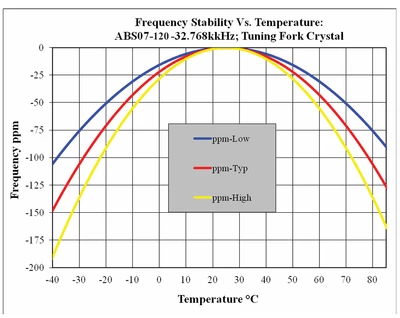

I built one prototype using an Abracon 32.768 kHz crystal for the LSE, with two 6 pF C0G load capacitors. To test it, I routed the LSE to MCO1 and measured the clock frequency while sweeping the temperature from -20°C to 70°C in our temperature chamber. I then compared the measured ppm deviation against the deviation predicted by the Abracon datasheet equation (ppm = -0.035 ppm/°C² × (T - 25°C)²):

| Time [wall clock] | Ambient temperature [°C] | Measured MCO frequency [kHz] | Measured deviation [ppm] | Predicted deviation [ppm] (per Abracon datasheet equation) |
| --- | --- | --- | --- | --- |
| 10:10 | -20.9 | 32.76681 | -36.3 | -73.74 |
| 10:13 | -20.6 | 32.76672 | -39.1 | -72.78 |
| 10:24 | -11.9 | 32.76712 | -26.9 | -47.66 |
| 10:34 | -3.9 | 32.76750 | -15.3 | -29.23 |
| 10:45 | 4.2 | 32.76785 | -4.6 | -15.14 |
| 10:56 | 12.6 | 32.76809 | 2.7 | -5.38 |
| 11:06 | 19.8 | 32.76818 | 5.5 | -0.95 |
| 11:17 | 28.5 | 32.76820 | 6.1 | -0.43 |
| 11:28 | 36.2 | 32.76810 | 3.1 | -4.39 |
| 11:38 | 44.1 | 32.76785 | -4.6 | -12.77 |
| 11:49 | 52.2 | 32.76750 | -15.3 | -25.89 |
| 12:02 | 62.3 | 32.76685 | -35.1 | -48.70 |
| 12:14 | 70.5 | 32.76620 | -54.9 | -72.46 |
| 12:25 | 70.1 | 32.76598 | -61.6 | -71.19 |
| 12:54 | 70 | 32.76590 | -64.1 | -70.88 |
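For reference, the two ppm columns in the table can be reproduced with a short C sketch like the one below. The function names are my own (not from any ST or Abracon library), and the -0.035 ppm/°C² coefficient and 25°C turnover point are the datasheet values quoted above.

```c
/* Nominal LSE frequency in Hz. */
#define LSE_NOMINAL_HZ 32768.0

/* Deviation of a measured frequency (given in kHz, as in the table)
 * from the 32.768 kHz nominal, expressed in ppm. */
double measured_ppm(double freq_khz)
{
    double freq_hz = freq_khz * 1000.0;
    return (freq_hz - LSE_NOMINAL_HZ) / LSE_NOMINAL_HZ * 1e6;
}

/* Datasheet parabola: ppm = -0.035 * (T - 25)^2, with T in degrees C. */
double predicted_ppm(double temp_c)
{
    double dt = temp_c - 25.0;
    return -0.035 * dt * dt;
}
```

For the first row, `measured_ppm(32.76681)` comes out at about -36.3 ppm and `predicted_ppm(-20.9)` at about -73.74 ppm, matching the table.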

My planned next steps are to:

- Test various load capacitances to try to bring the room-temperature error as close to 0ppm as possible

- Use software calibration to adjust `CALP` and `CALM` on the fly according to the temperature read via the ADC, to counteract (1) the room-temperature ppm offset of the crystal and (2) the additional offset incurred at high and low temperatures (per the Abracon equation).
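To make the second step concrete, here is a minimal sketch of how I would turn a crystal error in ppm into `CALP`/`CALM` values. It assumes the smooth-calibration relation as I read it in the STM32F4 reference manual, F_CAL = F_RTCCLK × [1 + (CALP × 512 - CALM) / (2^20 + CALM - CALP × 512)], over a 32-second (2^20 cycle) window. The struct and function names are hypothetical, not part of the HAL.

```c
#include <stdint.h>

/* Hypothetical container for the two smooth-calibration fields. */
typedef struct {
    uint32_t calp;  /* 0 or 1: insert 512 extra pulses per 32 s window */
    uint32_t calm;  /* 0..511: pulses masked per 32 s window           */
} rtc_smooth_cal_t;

/* crystal_error_ppm is the crystal's own error: negative means the
 * crystal runs slow, so the RTC must be sped up. Solving the relation
 * above for the net pulse count N = CALP*512 - CALM gives
 * N = -error * 2^20, i.e. one step is roughly 0.95 ppm. */
rtc_smooth_cal_t rtc_cal_from_ppm(double crystal_error_ppm)
{
    double x = -crystal_error_ppm * 1e-6 * 1048576.0;
    long n = (long)(x >= 0.0 ? x + 0.5 : x - 0.5);  /* round to nearest */

    /* Clamp to what the hardware can express: +512 .. -511 pulses. */
    if (n > 512)  n = 512;
    if (n < -511) n = -511;

    rtc_smooth_cal_t cal;
    if (n > 0) {
        cal.calp = 1;                    /* add 512, then mask the excess */
        cal.calm = (uint32_t)(512 - n);
    } else {
        cal.calp = 0;                    /* only mask pulses */
        cal.calm = (uint32_t)(-n);
    }
    return cal;
}
```

With the -36.3 ppm I measured at -20.9°C this gives `CALP = 1`, `CALM = 474` (a net +38 pulses per window). As far as I can tell, the resulting values would then be written with something like `HAL_RTCEx_SetSmoothCalib()`, but I have not verified that part on hardware yet.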

However, after doing some research, I'm a bit less confident about my approach:

- The temperature slope I measured was different from the one given by the Abracon datasheet

- The measurement period needed to verify the RTC after calibration (32 seconds) is very long, and I'm unsure whether our equipment is suited for it

- I don't know how I will tune each device if the temperature slope differs for every crystal.
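One idea for the per-device question is to fit each unit's own parabola from a few chamber measurements instead of trusting the datasheet coefficients. The sketch below is only an illustration of that idea, under the assumption that a plain quadratic ppm = c0 + c1·T + c2·T² describes the crystal well enough; `fit_parabola` and `turnover_temp` are names I made up.

```c
#include <stddef.h>

static double absd(double v) { return v < 0.0 ? -v : v; }

/* Least-squares fit of y = c[0] + c[1]*t + c[2]*t^2.
 * Returns 0 on success, -1 if there are fewer than 3 points or a
 * pivot is numerically zero (e.g. not enough distinct temperatures). */
int fit_parabola(const double *t, const double *y, size_t n, double c[3])
{
    if (n < 3) return -1;

    /* Accumulate sums of t^k (k = 0..4) and of y*t^k (k = 0..2). */
    double s[5] = {0}, b[3] = {0};
    for (size_t i = 0; i < n; i++) {
        double p = 1.0;
        for (int k = 0; k < 5; k++) {
            s[k] += p;
            if (k < 3) b[k] += p * y[i];
            p *= t[i];
        }
    }

    /* Augmented normal-equation matrix. */
    double a[3][4] = {
        { s[0], s[1], s[2], b[0] },
        { s[1], s[2], s[3], b[1] },
        { s[2], s[3], s[4], b[2] },
    };

    /* Gaussian elimination with partial pivoting. */
    for (int col = 0; col < 3; col++) {
        int piv = col;
        for (int r = col + 1; r < 3; r++)
            if (absd(a[r][col]) > absd(a[piv][col])) piv = r;
        if (absd(a[piv][col]) < 1e-12) return -1;
        for (int k = 0; k < 4; k++) {
            double tmp = a[col][k];
            a[col][k] = a[piv][k];
            a[piv][k] = tmp;
        }
        for (int r = col + 1; r < 3; r++) {
            double f = a[r][col] / a[col][col];
            for (int k = col; k < 4; k++) a[r][k] -= f * a[col][k];
        }
    }

    /* Back-substitution. */
    for (int r = 2; r >= 0; r--) {
        double acc = a[r][3];
        for (int k = r + 1; k < 3; k++) acc -= a[r][k] * c[k];
        c[r] = acc / a[r][r];
    }
    return 0;
}

/* Temperature at the vertex of the fitted parabola. */
double turnover_temp(const double c[3])
{
    return -c[1] / (2.0 * c[2]);
}
```

Feeding it the temperature and measured-ppm columns from my table would give this prototype's own curvature `c[2]` and turnover temperature, which could then be stored per device and used in place of the datasheet equation. Whether three or four chamber points per unit are enough in production is exactly what I don't know.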

Is it feasible to tune an RTC like this to achieve 20 ppm accuracy over a wide range of temperatures using only software? Or am I better off getting something like a temperature-controlled oscillator?

Thanks!