INTERNAL ERROR: Order of dimensions of input cannot be interpreted

- October 2, 2025

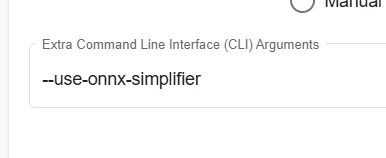

I'm getting this error and don't understand the problem. I tried adding the suggested "--use-onnx-simplifier" option from another post to the web command line, but it didn't look like it took effect. Here is the output:

>>> stedgeai analyze --model version-RFB-320.onnx --st-neural-art custom@/tmp/stm32ai_service/7f0992f6-31d1-46c4-892e-ecc0375878b5/profile-4de288e7-27c5-4d83-814b-a8f7038dc635.json --target stm32n6 --optimize.export_hybrid True --name network --workspace workspace --output output

ST Edge AI Core v2.2.0-20266 2adc00962

WARNING: Unsupported keys in the current profile custom are ignored: memory_desc

> memory_desc is not a valid key anymore, use machine_desc instead

INTERNAL ERROR: Order of dimensions of input cannot be interpreted

I have also tried adding a transpose layer so the input is ordered as [1, H, W, C], and that still produced the same error. Is there a way to get more verbose output than what I have? The message is not very helpful about what actually needs fixing. I spent a lot of time on the transpose approach, since the error says the tool doesn't like the order of the input dimensions, but to no avail. Please help, thanks.
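In case it helps with diagnosing this, here is a rough sketch of how the declared input shapes of the ONNX file could be checked before and after adding the transpose. It assumes the `onnx` Python package is installed. The helper names `onnx_input_shapes` and `guess_layout` are made up for illustration, and the "small dimension is the channel" rule is only a heuristic of mine, not how ST Edge AI Core decides the dimension order.

```python
def onnx_input_shapes(path):
    """Return {input_name: [dims]} for the real (non-initializer) graph inputs.

    Requires the `onnx` package; it is imported lazily so the rest of this
    snippet works without it.
    """
    import onnx

    model = onnx.load(path)
    # Older exports list weights among the graph inputs, so filter them out.
    initializer_names = {init.name for init in model.graph.initializer}
    shapes = {}
    for inp in model.graph.input:
        if inp.name in initializer_names:
            continue
        dims = []
        for d in inp.type.tensor_type.shape.dim:
            # A dimension is either a fixed value or a symbolic name.
            dims.append(d.dim_param if d.dim_param else d.dim_value)
        shapes[inp.name] = dims
    return shapes


def guess_layout(shape, max_channels=4):
    """Heuristic guess (an assumption, not the tool's logic) for a 4-D shape.

    Treats a dimension <= max_channels as the channel axis and expects both
    spatial dimensions to be larger than that.
    """
    if len(shape) != 4:
        return "unknown"
    if not all(isinstance(d, int) and d > 0 for d in shape):
        return "unknown"  # dynamic or symbolic dimensions
    _, d1, d2, d3 = shape
    if d1 <= max_channels and d2 > max_channels and d3 > max_channels:
        return "NCHW"
    if d3 <= max_channels and d1 > max_channels and d2 > max_channels:
        return "NHWC"
    return "ambiguous"
```

Calling `onnx_input_shapes("version-RFB-320.onnx")` and passing each shape to `guess_layout` would at least show whether the input has four fixed dimensions or contains symbolic ones, which seems worth ruling out given the error text.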