Possible suboptimal STM32CubeAI Conv2D kernels? Errors for documented parameters?

Hi there, I'm not sure if it's better to post here or on GitHub. If there is a preferred place, please let me know!

TL;DR

I'd appreciate any guidance on how to correctly configure the pad-values for 1-bit conv operations within X-CUBE-AI.

I appreciate those at ST may not be able to fully comment on the inner workings of ST's kernel implementation, but I'm concerned that 1-bit convolutions may be implemented sub-optimally.

Problem and Setup

The problem I'm working on is generating a Binary Neural Network (BNN). I've decided to trial the X-CUBE-AI framework for my company.

A terse description of my environment, to help replicate this, is as follows (most of it should be irrelevant, but just in case):

- OS: Mac OS 15.1

- Chip: M2 Pro

- Python: 3.11.8

- TensorFlow: 2.15.0

- Larq: 0.13.3

- X-CUBE-AI: 9.1.0

Simple Self-Contained Example

I've got a very simple Python script called "test.py" (see below). It imports tensorflow and larq and creates a model with a single larq binary 2D convolution, where both the inputs and the weights are binarised by taking their sign (i.e. they are mapped to {-1, +1}). Note that we use "same" padding, so there will typically be some padding at the edges.

import tensorflow as tf
import larq as lq


def build_model(pad_values: int, kernel_size: int = 3, stride: int = 1, out_channels: int = 16):
    x = tf.keras.Input(shape=(32, 32, 1))
    layer = lq.layers.QuantConv2D(
        filters=out_channels,
        kernel_size=(kernel_size, kernel_size),
        kernel_quantizer="ste_sign",
        input_quantizer="ste_sign",
        use_bias=False,
        strides=(stride, stride),
        padding="same",
        pad_values=pad_values,
        groups=1,
    )
    y = layer(x)
    return tf.keras.Model(inputs=x, outputs=y)


print(tf.__version__)
model = build_model(pad_values=0)  # <-- TRY VARYING THIS VALUE

# Save the model
model.save("test.h5")

At the end, the Python script saves the model to a file. I have a second script (bash this time) called "test.sh", see below:

#!/bin/bash
set -e

# Ensure we always start from a new model
rm -f test.h5

# Create a model
python test.py

# Change this path if your install is at a different location
~/STM32Cube/Repository/Packs/STMicroelectronics/X-CUBE-AI/9.1.0/Utilities/macarm/stedgeai \
    analyze \
    --model test.h5 \
    --target stm32 \
    --type keras

Note how it calls the Python script "test.py" to build the model, and then passes the model "test.h5" to the "stedgeai" CLI.

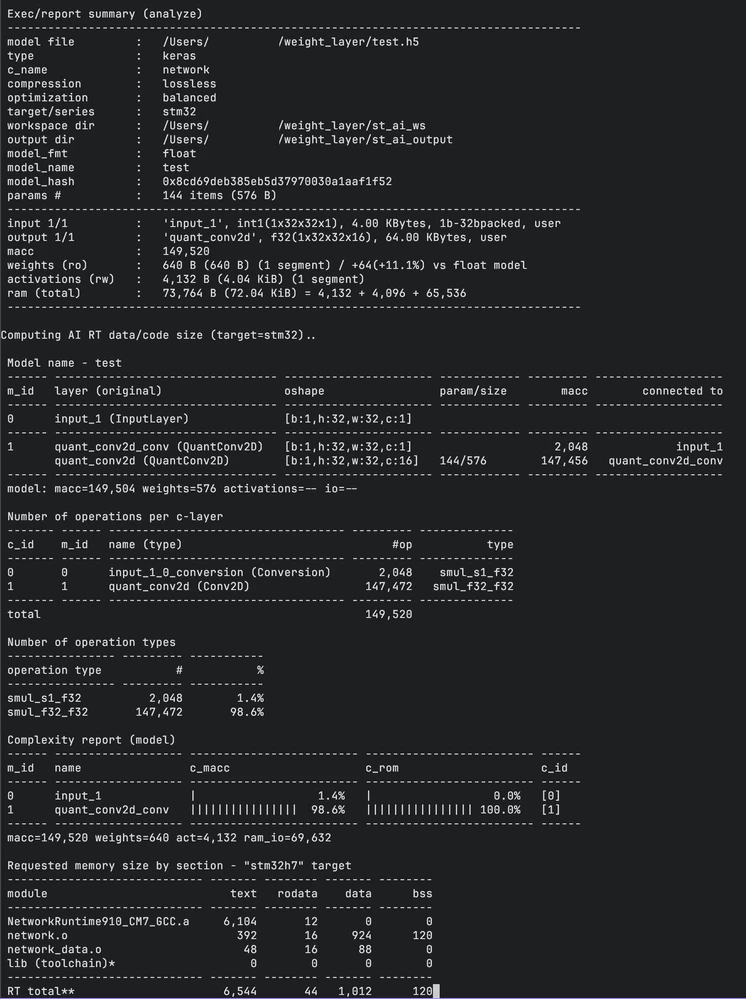

What I've noticed is that if the "pad_values" is 0, then "stedgeai" will work fine, then we can transpile from keras to the ST Edge internal framework successfully, and we'll see something like:

However, if I return to the Python script above and change pad_values to either -1 or 1, then we crash and burn! We'll see an error like:

NOT IMPLEMENTED: QuantConv2D (padded with 1) with formats {'out_0': (FLOAT, 32 bit, C Size: 32 bits), 'weights': (SIGNED, 1 bit, C Size: 1 bits Scales: [2.0] Zeros: [-0.5] Quantizer: UNIFORM), 'in_0': (SIGNED, 1 bit, C Size: 1 bits Scales: [2.0] Zeros: [-0.5] Quantizer: UNIFORM)} not supported

For the record, I think this error message (and the multiple other errors I've worked through) leaves a little to be desired. That is not the objective of this post, but as feedback: if you have a closed-source library, error messages are crucial for guiding users to a working solution. I only realised that pad_values broke the conv op through trial and error on a number of other parameters. In my opinion, this would have been much clearer with better, more verbose error messages!

Why I Think There is a Problem

I've implemented a few binary convs by hand along the way. Compared to a naive conv2d implementation, we'd typically use XNOR and popcount to do the actual convolution efficiently (as well as leveraging GEMM via im2col to help improve performance).
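For anyone unfamiliar with the trick, here is a minimal pure-Python sketch of the XNOR/popcount dot product for vectors with values in {-1, +1}. It is only an illustration of the general technique, not ST's implementation. The helper names `pack_bits` and `binary_dot` are made up for this example, and the encoding (bit = 1 for +1, bit = 0 for -1) is an assumption.

```python
import random


def pack_bits(values):
    """Pack a {-1, +1} vector into an int: bit i is 1 where values[i] == +1."""
    word = 0
    for i, value in enumerate(values):
        if value > 0:
            word |= 1 << i
    return word


def binary_dot(a_bits, b_bits, n):
    """Dot product of two n-element {-1, +1} vectors stored as bit words."""
    mask = (1 << n) - 1
    xnor = ~(a_bits ^ b_bits) & mask    # 1 wherever the two signs agree
    matches = bin(xnor).count("1")      # popcount
    return 2 * matches - n              # agreements minus disagreements


random.seed(0)
a = [random.choice([-1, 1]) for _ in range(9)]  # e.g. a flattened 3x3 input patch
b = [random.choice([-1, 1]) for _ in range(9)]  # e.g. a flattened 3x3 kernel

result = binary_dot(pack_bits(a), pack_bits(b), len(a))
reference = sum(x * y for x, y in zip(a, b))
print(result, reference)  # the two values should be equal
```

The identity `2 * matches - n` only holds when every element is either -1 or +1, which is why the padding value matters for the rest of this post.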

What I don't understand is this: in my example above, my larq conv2d layer uses the sign operator to quantise, meaning we have binary values in {-1, +1} rather than {0, 1}. If we add zero "same" padding, i.e. pad the edges with zeros, then the signal being fed to the keras operation is ternary: {-1, 0, +1}. In keras this is fine; training is done in float32, so it probably doesn't matter.
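To make the ternary point concrete, here is a small pure-Python sketch (no TensorFlow or larq involved). It assumes the padding is applied after the sign quantiser, as described above, and that the quantiser maps 0 to +1. The helper names `sign`, `pad_border` and `distinct_values` are made up for illustration.

```python
def sign(x):
    """Sign quantiser to {-1, +1}; mapping 0 to +1 is an assumption of this sketch."""
    return 1 if x >= 0 else -1


def pad_border(image, pad_value):
    """Add a one-pixel border, as "same" padding does for a 3x3 kernel at stride 1."""
    width = len(image[0])
    rows = [[pad_value] + list(row) + [pad_value] for row in image]
    return [[pad_value] * (width + 2)] + rows + [[pad_value] * (width + 2)]


def distinct_values(image):
    """Sorted list of the distinct values that occur in a 2D list."""
    return sorted({value for row in image for value in row})


raw = [[0.3, -1.2, 0.7],
       [-0.5, 2.0, -0.1],
       [1.5, -0.9, 0.4]]
quantised = [[sign(value) for value in row] for row in raw]

zero_padded = pad_border(quantised, 0)  # corresponds to pad_values=0
one_padded = pad_border(quantised, 1)   # corresponds to pad_values=1

print(distinct_values(zero_padded))  # [-1, 0, 1] -> three levels, not 1-bit
print(distinct_values(one_padded))   # [-1, 1]    -> still representable in 1 bit
```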

But when we get to an efficient implementation in C, how are the 0 values represented? Ideally the convolution would convert {-1, +1} to {0, 1} and then go on its merry way computing the conv with XNOR/popcount. But if we have ternary {-1, 0, +1} values, then I'm not sure what's going on in the kernel under the hood, nor whether it's optimal - read here for more on this topic.
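This is not a claim about what the ST kernels actually do. Purely as an illustration of the extra work zero padding implies, one hypothetical approach is to carry a second bit mask that marks the real (non-padded) pixels and to count agreements only over those positions. The names `pack`, `popcount` and `masked_binary_dot` below are invented for this sketch.

```python
def pack(values, predicate):
    """Build an int whose bit i is 1 where predicate(values[i]) is true."""
    word = 0
    for i, value in enumerate(values):
        if predicate(value):
            word |= 1 << i
    return word


def popcount(word):
    """Number of set bits in a non-negative int."""
    return bin(word).count("1")


def masked_binary_dot(x_bits, w_bits, valid_bits):
    """XNOR/popcount dot product where positions outside valid_bits contribute 0."""
    agree = ~(x_bits ^ w_bits) & valid_bits
    return 2 * popcount(agree) - popcount(valid_bits)


# Flattened 3x3 patch at the top-left corner of an image:
# 0 marks zero padding, the other entries are sign-quantised pixels.
patch = [0, 0, 0,
         0, 1, -1,
         0, -1, 1]
kernel = [1, -1, 1,
          -1, 1, 1,
          -1, -1, 1]

x_bits = pack(patch, lambda v: v > 0)       # padded positions get an arbitrary bit (0)
valid_bits = pack(patch, lambda v: v != 0)  # 1 only where there is a real pixel
w_bits = pack(kernel, lambda v: v > 0)

result = masked_binary_dot(x_bits, w_bits, valid_bits)
reference = sum(x * w for x, w in zip(patch, kernel))
print(result, reference)  # the two values should be equal
```

With padding of -1 or +1 the mask is unnecessary, because every position is already a valid 1-bit value; with zero padding, some per-position bookkeeping like this (or a fallback to a wider data type) seems unavoidable.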

Confusing Docs Don't Help Either

I'll be honest: I've found a number of discrepancies in the documentation and GitHub examples (model zoo), as well as missing documentation, which have made the X-CUBE-AI framework difficult to use.

I'm sharing this in case anyone at ST wants to improve these areas, or can show me where I've misunderstood things or got it wrong (highly possible!).

ST Larq Docs

https://wiki.st.com/stm32mcu/wiki/AI:Deep_Quantized_Neural_Network_support#Supported_Larq_layers

See the above link; on the larq Conv2D layer it says:

for binary quantization, 'pad_values=-1 or 1' is requested if 'padding="same"'

However, I can't get pad values of -1 or 1 to work with "stedgeai" at all. The documentation suggests that -1 or 1 is required if padding is "same" (which I've set), so it appears to be incorrect.

ST Model Zoo Code

One of the things I did when trying to work out how "stedgeai" deals with binary convs was to search for all uses of larq in the model zoo repo. I'm really confused by how this code here suggests we can use pad_values=1, whereas, as I've demonstrated in my example, this causes an obscure error.

Question(s)

- So what's going on here?

- Why does the model zoo suggest that pad_values=1 is okay, when "stedgeai" fails on it?

- What happens under the hood in the ST Micro kernels if we pad a 1-bit convolution with zeros?

Thanks in advance, and I hope this hasn't come across as too negative about the framework. I'm just keen to test out the most optimised neural networks with it and could do with a hand!