Question about RSQRT implementation on STM32N6 Neural-ART NPU

Hello ST Support Team,

I am using an STM32N6 with the Neural-ART NPU (ST Edge AI 3.0) and comparing inference outputs between:

1. TFLite on PC, and

2. the converted model running on the STM32N6 NPU.

Could you please clarify how `rsqrt` is implemented on the STM32N6 NPU?

I would like to know:

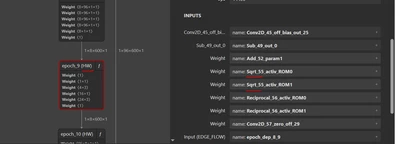

1. Is `rsqrt` a native HW operator, or is it decomposed (for example into `sqrt + reciprocal`)?

2. What approximation method is used internally (LUT / polynomial / Newton-Raphson / other)?

3. What numeric format is used in the HW path (fixed-point details, precision)?

4. What rounding and saturation rules are applied?

5. Are there documented error bounds or expected max/mean error vs float reference?

6. Is there any compiler/runtime option to select a more accurate vs faster mode for this operation?

My model uses INT8 input and output tensors, and I feed the same input tensor to both platforms.
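To make the error question concrete, here is a minimal sketch of how the two INT8 outputs could be compared, both in quantization steps (LSB) and in dequantized float units. It assumes the usual affine quantization `real = scale * (q - zero_point)`. The function names, the `scale` and `zero_point` values, and the sample arrays are illustrative placeholders, not values from my model or from any ST tool.

```python
import numpy as np

def dequantize(q, scale, zero_point):
    """Map INT8 values back to float: real = scale * (q - zero_point)."""
    return scale * (q.astype(np.int32) - zero_point)

def compare_outputs(q_ref, q_npu, scale, zero_point):
    """Return max/mean absolute error in LSB (INT8 steps) and in float units."""
    # Widen to int32 first so the subtraction cannot wrap around in int8.
    lsb_diff = np.abs(q_ref.astype(np.int32) - q_npu.astype(np.int32))
    float_diff = np.abs(
        dequantize(q_ref, scale, zero_point) - dequantize(q_npu, scale, zero_point)
    )
    return {
        "max_lsb": int(lsb_diff.max()),
        "mean_lsb": float(lsb_diff.mean()),
        "max_abs": float(float_diff.max()),
        "mean_abs": float(float_diff.mean()),
    }

# Placeholder data only: hypothetical reference (TFLite) and NPU outputs.
q_ref = np.array([-128, -5, 0, 17, 127], dtype=np.int8)
q_npu = np.array([-128, -4, 0, 15, 127], dtype=np.int8)

# Placeholder quantization parameters (assumed, not taken from a real model).
stats = compare_outputs(q_ref, q_npu, scale=0.05, zero_point=-3)
print(stats)
```

Reporting the difference in LSB as well as in float units makes it easier to tell a one-step rounding difference from a larger approximation error in the `rsqrt` path.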

Thank you.