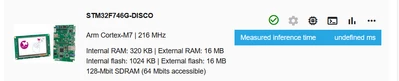

ST Edge AI Developer Cloud Benchmark not working with STM32F746G-DISCO

Hello! I am using the ST Edge AI Developer Cloud to benchmark the inference speed of some models in a controlled environment. This worked well on the STM32F746G-DISCO board until a couple of days ago. Since then, it keeps reporting that my model has a measured inference time of "undefined ms".

Is this a known issue? Also, is it possible to benchmark my model's inference time locally on my own board, using the same environment as the Developer Cloud? I am aware of the local benchmarking tool in the ModelZoo services, but it seems to report only the estimated memory footprint, not the inference time.

Thank you!