Why Are Some Layers Executed in Software with X-CUBE-AI on STM32N6570-DK?

Hello,

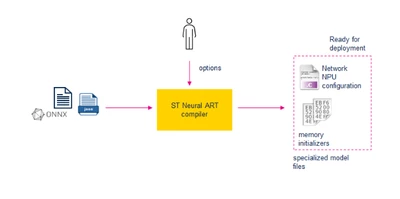

I'm working with the STM32N6570-DK board and using X-CUBE-AI to run quantized neural networks converted from ONNX models.

I'm trying to better understand which parts of the model are executed in software vs. hardware, specifically on this board, and what determines that split.

My Observations:

1. ResNet-8 Model (custom quantized)

When I validated a quantized ResNet-8 model, the output during target validation showed:

Total number of epochs: 16 of which 2 implemented in software

| epoch ID | HW/SW/EC | Operation (SW only) |
| --- | --- | --- |
| epoch 15 | -SW- | ( Softmax ) |
| epoch 16 | -SW- | ( DequantizeLinear ) |

So almost everything was offloaded to hardware; only the final Softmax and DequantizeLinear ran in software, which I expected.
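To compare runs without reading the summaries by eye, I put together a small parser for the validation output. It is only a sketch: the regular expressions assume raw lines of the form `epoch 15 -SW- ( Softmax )` as printed in my run, and `parse_sw_epochs` is a name I made up, not part of X-CUBE-AI.

```python
import re

# Raw text as printed during target validation (copied from the run above).
LOG = """\
Total number of epochs: 16 of which 2 implemented in software
epoch ID HW/SW/EC Operation (SW only)
epoch 15 -SW- ( Softmax )
epoch 16 -SW- ( DequantizeLinear )
"""

# Assumed line formats, taken from the output above.
TOTAL_RE = re.compile(
    r"Total number of epochs:\s*(\d+)\s+of which\s+(\d+)\s+implemented in software"
)
EPOCH_RE = re.compile(r"epoch\s+(\d+)\s+-SW-\s+\(\s*(.+?)\s*\)")


def parse_sw_epochs(text):
    """Return (total_epochs, sw_epoch_count, [(epoch_id, operation), ...])."""
    total = sw_count = None
    match = TOTAL_RE.search(text)
    if match:
        total, sw_count = int(match.group(1)), int(match.group(2))
    # The header line has no numeric epoch ID, so it is skipped automatically.
    sw_ops = [(int(epoch), op) for epoch, op in EPOCH_RE.findall(text)]
    return total, sw_count, sw_ops


total, sw_count, sw_ops = parse_sw_epochs(LOG)
print(f"{sw_count}/{total} epochs in software:", sw_ops)
```

Pasting the output of another run into `LOG` gives the same kind of summary, which makes it easier to see which operator types fall back to software.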

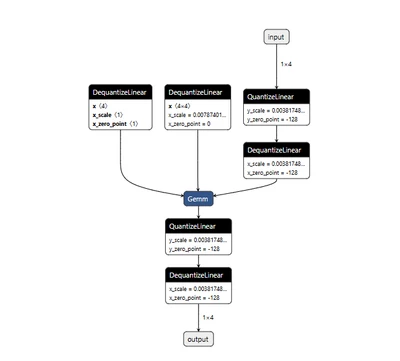

2. Simple Model Quantized via ST Developer Cloud

However, when I tested a very simple model (shown above), quantized through the ST Developer Cloud, I saw this:

Total number of epochs: 5 of which 3 implemented in software

| epoch ID | HW/SW/EC | Operation (SW only) |
| --- | --- | --- |
| epoch 2 | -SW- | ( DequantizeLinear ) |
| epoch 3 | -SW- | ( Conv ) |
| epoch 4 | -SW- | ( QuantizeLinear ) |

Here, even Conv was executed in software, despite the model being quantized.
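One check I can run on my side is to look at how each Conv is wired in the exported ONNX file: which op feeds each of its inputs and which op consumes its output. The sketch below does that over any sequence of nodes exposing `op_type`, `name`, `input`, and `output`, which are the fields of an ONNX `NodeProto`. The helper name `conv_neighbors` and the toy node list are made up for illustration; whether the compiler needs a particular pattern around Conv in order to offload it is exactly what I don't know.

```python
from types import SimpleNamespace as Node


def conv_neighbors(nodes):
    """For every Conv node, report the producers of its inputs and the consumers of its outputs."""
    nodes = list(nodes)
    producer = {}   # tensor name -> op_type of the node that produces it
    consumers = {}  # tensor name -> op_types of the nodes that read it
    for node in nodes:
        for out in node.output:
            producer[out] = node.op_type
        for inp in node.input:
            consumers.setdefault(inp, []).append(node.op_type)

    report = []
    for node in nodes:
        if node.op_type != "Conv":
            continue
        report.append({
            "name": node.name,
            # None means the tensor is a graph input or an initializer (constant).
            "inputs_from": [producer.get(i) for i in node.input],
            "outputs_to": [op for o in node.output for op in consumers.get(o, [])],
        })
    return report


# Hypothetical toy graph: a Conv wrapped in DequantizeLinear / QuantizeLinear nodes.
toy = [
    Node(op_type="DequantizeLinear", name="dq_x", input=["x_q", "x_scale", "x_zp"], output=["x"]),
    Node(op_type="DequantizeLinear", name="dq_w", input=["w_q", "w_scale", "w_zp"], output=["w"]),
    Node(op_type="Conv", name="conv0", input=["x", "w", "b"], output=["y"]),
    Node(op_type="QuantizeLinear", name="q_y", input=["y", "y_scale", "y_zp"], output=["y_q"]),
]

for entry in conv_neighbors(toy):
    print(entry)
```

With the `onnx` package installed, the same function should work on a real file via `conv_neighbors(onnx.load("model.onnx").graph.node)`, where `model.onnx` is a placeholder path. Comparing the report for the ResNet-8 file and the simple model would at least show whether their Conv nodes are wired differently.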

My Questions:

1. Why does Conv run in software in the second case but in hardware in the ResNet-8 case?
2. Does the hardware accelerator require a certain layer structure, padding style, kernel size, or input alignment for a layer to be offloaded?
3. Is there a way to force, or at least hint, that a layer should run in hardware if it technically can?
4. Under what conditions do DequantizeLinear and QuantizeLinear run in hardware?

Thanks a lot