X-CUBE-AI can't analyze model

Hello,

I'm trying to convert my Keras model for the STM32N6.

STM32CubeMX version: 6.15.0

X-CUBE-AI version: 10.2.0

The code for my model:

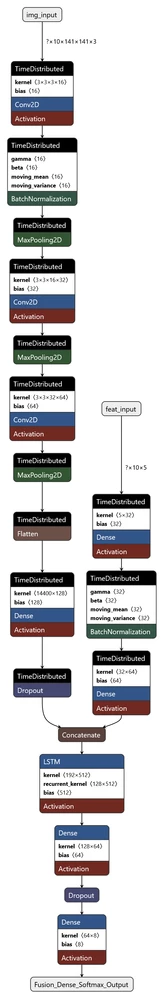

```python
from tensorflow.keras import Input, layers, models, optimizers

# class_names: list of target classes, defined elsewhere in the training script

img_input = Input(shape=(10, 141, 141, 3), name='img_input')

# --- CNN branch applied per-frame via TimeDistributed ---
cx = layers.TimeDistributed(layers.Conv2D(16, (3, 3), activation="relu"), name="CNN_Conv1")(img_input)
cx = layers.TimeDistributed(layers.BatchNormalization(), name="CNN_Batch_Norm")(cx)
cx = layers.TimeDistributed(layers.MaxPooling2D(2, 2), name="CNN_Pool1")(cx)
cx = layers.TimeDistributed(layers.Conv2D(32, (3, 3), activation="relu"), name="CNN_Conv2")(cx)
cx = layers.TimeDistributed(layers.MaxPooling2D(2, 2), name="CNN_Pool2")(cx)
cx = layers.TimeDistributed(layers.Conv2D(64, (3, 3), activation='relu'), name="CNN_Conv3")(cx)
cx = layers.TimeDistributed(layers.MaxPooling2D(2, 2), name="CNN_Pool3")(cx)
cx = layers.TimeDistributed(layers.Flatten(), name="CNN_Flatten")(cx)
cx = layers.TimeDistributed(layers.Dense(128, activation='relu'), name="CNN_Dense")(cx)
cx = layers.TimeDistributed(layers.Dropout(0.2), name="CNN_Dropout")(cx)

# --- Feature branch ---
feat_input = Input(shape=(10, 5), name='feat_input')
fx = layers.TimeDistributed(layers.Dense(32, activation='relu'), name="Feature_Dense1")(feat_input)
fx = layers.TimeDistributed(layers.BatchNormalization(), name="Feature_Batch_Norm")(fx)
fx = layers.TimeDistributed(layers.Dense(64, activation='relu'), name="Feature_Dense2")(fx)

# --- Fusion ---
combined = layers.Concatenate(name="Fusion_Concat")([cx, fx])

# LSTM
combined = layers.LSTM(128, name="Fusion_LSTM")(combined)

# Final MLP layers
combined = layers.Dense(64, name="Fusion_Dense", activation="relu")(combined)
combined = layers.Dropout(0.4, name="Fusion_Dropout")(combined)
output = layers.Dense(len(class_names), activation='softmax', name="Fusion_Dense_Softmax_Output")(combined)

model = models.Model(inputs=[img_input, feat_input], outputs=output)
optimizer = optimizers.Adam(learning_rate=1e-4)
model.compile(optimizer, loss='categorical_crossentropy', metrics=['accuracy'])
model.summary()
```

Attached are the Netron graphs for the .h5 model and the converted ONNX model.

Conversion via:

```python
import onnx
import tensorflow as tf
import tf2onnx

def convert_onnx():
    model = tf.keras.models.load_model("radar_classifier.h5")

    # Fixed batch size of 1 for both inputs
    spec = (
        tf.TensorSpec([1, 10, 141, 141, 3], tf.float32, name="img_input"),
        tf.TensorSpec([1, 10, 5], tf.float32, name="feat_input"),
    )

    onnx_model, _ = tf2onnx.convert.from_keras(model, input_signature=spec, opset=13)
    onnx.save_model(onnx_model, "radar_classifier.onnx")
```


However, when analyzing the .h5 model (model input type: Keras, with the STM32Cube.AI MCU runtime), I get the following error:

NOT IMPLEMENTED: Unsupported wrapped layer type: BatchNormalization
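One workaround that may be worth testing (an assumption, untested here against X-CUBE-AI 10.2.0) is to drop the `TimeDistributed` wrapper and apply `layers.BatchNormalization()` directly to the 5D `CNN_Conv1` output. Keras' `BatchNormalization` normalizes along the last axis by default for inputs of any rank, so it should compute the same thing as the wrapped version. The NumPy sketch below checks that equivalence; `bn_inference`, `time_distributed` and `bn` are hypothetical helpers that emulate the layers, not Keras code, and the sketch says nothing about whether the converter accepts the unwrapped layer.

```python
import numpy as np

def bn_inference(x, gamma, beta, moving_mean, moving_var, eps=1e-3):
    # Inference-mode batch norm: per-channel affine transform on the last axis
    # (mirrors Keras' default axis=-1 and epsilon=1e-3).
    return gamma * (x - moving_mean) / np.sqrt(moving_var + eps) + beta

def time_distributed(fn, x):
    # Emulates TimeDistributed: fold (batch, time) into one axis, apply fn
    # to every frame, then restore the (batch, time, ...) layout.
    b, t = x.shape[:2]
    y = fn(x.reshape((b * t,) + x.shape[2:]))
    return y.reshape((b, t) + y.shape[1:])

rng = np.random.default_rng(0)
# Small stand-in for the (batch, 10, 139, 139, 16) CNN_Conv1 output.
x = rng.normal(size=(2, 10, 7, 7, 16))
c = x.shape[-1]
gamma, beta = rng.normal(size=c), rng.normal(size=c)
mean, var = rng.normal(size=c), rng.uniform(0.5, 2.0, size=c)

def bn(t):
    return bn_inference(t, gamma, beta, mean, var)

wrapped = time_distributed(bn, x)  # TimeDistributed(BatchNormalization())
unwrapped = bn(x)                  # BatchNormalization() on the 5D tensor
print(np.allclose(wrapped, unwrapped))  # True

# Training mode uses batch statistics: per-channel mean/variance over every
# non-channel axis. Folding time into batch does not change which values are pooled.
folded = x.reshape((-1,) + x.shape[2:])
print(np.allclose(folded.mean(axis=(0, 1, 2)), x.mean(axis=(0, 1, 2, 3))))  # True
print(np.allclose(folded.var(axis=(0, 1, 2)), x.var(axis=(0, 1, 2, 3))))    # True
```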

If I remove the BatchNormalization layer, the analysis succeeds; the retrained model performs worse, but still acceptably. The remaining issue is that the model does not get optimized/quantized, and I cannot find any information on why. It would need over 14 MiB for activations, almost 8 MiB for weights, and over 490 million MACC.
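For a rough idea of where those numbers come from, the sketch below recounts the dominant layers by hand. It assumes float32, batch size 1, and the Keras defaults of stride 1 with 'valid' padding for `Conv2D` and 'valid' padding for `MaxPooling2D(2, 2)`. It is an estimate, not X-CUBE-AI's own accounting (which also counts pooling, activations and buffer layout), and the helper names are hypothetical. Under these assumptions `CNN_Dense` (14400 inputs per frame after `CNN_Flatten`) holds about 90% of the weights, the three convolutions on all 10 full-resolution frames account for most of the MACC, and the model input plus the `CNN_Conv1` output for all frames already come to about 14 MiB.

```python
# Rough size estimate for the posted model: float32, batch size 1.
# Assumes Keras defaults: Conv2D stride 1 'valid', MaxPooling2D(2, 2) 'valid'.
FRAMES = 10
BYTES = 4                  # float32
MiB = 1024 ** 2

def conv2d(h, w, c_in, filters, k=3):
    """Return (output shape, parameter count, MACC) of one Conv2D on one frame."""
    h_out, w_out = h - k + 1, w - k + 1
    params = k * k * c_in * filters + filters
    macc = h_out * w_out * filters * k * k * c_in
    return (h_out, w_out, filters), params, macc

def maxpool2d(h, w, c, size=2):
    return ((h - size) // size + 1, (w - size) // size + 1, c)

shape = (141, 141, 3)
conv_shapes = []
total_params = 0
total_macc = 0
for filters in (16, 32, 64):                  # CNN_Conv1..3, each followed by a pool
    shape, params, macc = conv2d(*shape, filters)
    conv_shapes.append(shape)
    total_params += params
    total_macc += FRAMES * macc               # TimeDistributed: the CNN runs once per frame
    shape = maxpool2d(*shape)

flat = shape[0] * shape[1] * shape[2]         # CNN_Flatten width per frame
dense_params = flat * 128 + 128               # CNN_Dense
total_params += dense_params + 4 * 16         # + CNN_Batch_Norm (gamma, beta, mean, variance)
total_macc += FRAMES * flat * 128

lstm_in, units = 128 + 64, 128                # Fusion_Concat width -> Fusion_LSTM
total_params += 4 * (lstm_in * units + units * units + units)
total_macc += FRAMES * 4 * (lstm_in + units) * units

# Feature branch and Fusion_Dense parameters (tiny; their MACC is not counted).
# The output layer is left out because its size depends on len(class_names).
total_params += (5 * 32 + 32) + 4 * 32 + (32 * 64 + 64) + (128 * 64 + 64)

h1, w1, c1 = conv_shapes[0]
act = FRAMES * (141 * 141 * 3 + h1 * w1 * c1)  # model input + CNN_Conv1 output, all frames

print("CNN_Pool3 output per frame:", shape)
print(f"CNN_Dense holds {dense_params} of {total_params} parameters")
print(f"weights     ~ {total_params * BYTES / MiB:.2f} MiB")
print(f"MACC        ~ {total_macc / 1e6:.1f} M")
print(f"input+Conv1 ~ {act * BYTES / MiB:.2f} MiB")
```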

When analyzing the ONNX model (model input type: ONNX, with the STM32Cube.AI Neural-ART runtime), I get:

INTERNAL ERROR: Found transpose model_CNN_Pool3_max_pooling2d_2_MaxPool__680 before {LSTM__280} with remapping (0, 2, 3, 1)
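For context on this second error: the remapping `(0, 2, 3, 1)` is an axis permutation. As far as I understand tf2onnx, it rewrites the Keras NHWC convolutions and poolings into ONNX's NCHW layout and inserts a Transpose with this permutation to restore NHWC order before `CNN_Flatten`, so the flattened vector matches the order `CNN_Dense` was trained on; the message indicates the Neural-ART compiler found that Transpose on the path to the LSTM and could not handle it. The pure-Python sketch below only illustrates what the permutation does to the `CNN_Pool3` shape, assuming the 10 frames are folded into the batch axis; `permute` is a hypothetical helper that mirrors the `perm` semantics of the ONNX Transpose operator.

```python
def permute(shape, perm):
    # ONNX Transpose semantics: output axis i is input axis perm[i].
    return tuple(shape[i] for i in perm)

# CNN_Pool3 output in NCHW with the 10 frames folded into the batch axis (assumed).
pool3_nchw = (10, 64, 15, 15)
pool3_nhwc = permute(pool3_nchw, (0, 2, 3, 1))
print(pool3_nhwc)  # (10, 15, 15, 64): channels moved last, as Keras expects before Flatten

# The inverse permutation converts back from NHWC to NCHW.
print(permute(pool3_nhwc, (0, 3, 1, 2)))  # (10, 64, 15, 15)
```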

I am quite new to this topic, so any help is appreciated. Thanks!

Best regards

Dominik