ADC static non-linearity correction using FFT harmonic analysis.

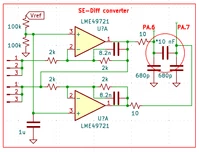

The method described below corrects static ADC non-linearity in software using a look-up table (LUT). It requires only a pure sine-wave source, which can be generated by any available analog circuitry.

In effect, it corrects the non-linearity of the whole analog front end of the conversion chain at once: the ADC and its drivers, signal conditioning / amplification stages, input protection, etc.

The same linearity correction can be done by applying a linear ramp voltage and generating an error LUT. The new method uses a sine wave instead, and consequently has a few advantages over the “classic” one:

1. The linearity of a sine wave is much easier to verify, down to -140 to -160 dB (0.1 – 0.01 ppm), using a simple twin-T notch filter.

2. The analog front end may include DC blocking capacitors.

3. The calibration test signal can sit inside the “working band”, so some complex dynamic distortion of the drivers (due to the fall of open-loop gain (AOL) over frequency, output stage “compression” close to the power rails, input offset varying over common-mode voltage (CM)) is corrected as well.
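The dB and ppm figures in item 1 are the same ratio in two notations. A small sketch of the conversion, assuming amplitude (not power) ratios relative to the fundamental; the function names are only illustrative:

```python
import math

def db_to_ppm(level_db):
    """Amplitude ratio given in dB, expressed in parts per million."""
    return 10 ** (level_db / 20) * 1e6

def ppm_to_db(ppm):
    """Parts per million expressed as an amplitude ratio in dB."""
    return 20 * math.log10(ppm * 1e-6)

for level in (-140, -160):
    print(f"{level} dB is {db_to_ppm(level):.3g} ppm")
```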

Software part

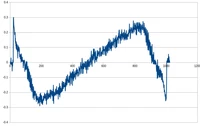

The software part is simple: an FFT produces the spectrum, then every bin is zeroed except the ones belonging to the fundamental frequency (real and imaginary parts). An inverse FFT then re-creates a single tone. It preserves the magnitude and phase of the original signal and has no distortion whatsoever. In essence, this process could be called “sine-wave regression”, if I’m not mistaken, in the same way that linear or exponential regression fits a mathematically well-described curve to a noisy / distorted set of data. The next stage is to subtract this sine wave from the input data, leaving only the errors. All that is left is to define a table of “segments” of the input range in which each error occurred and to create a LUT of errors.
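The steps above can be sketched in a few lines. This is only an illustration under stated assumptions, not the firmware from the demo: it assumes coherent sampling (a whole number of cycles in the record), computes the single fundamental bin directly instead of running a full FFT / inverse FFT pair (for one retained tone the result is the same), indexes segments by the measured sample value, and fills each LUT entry with the mean error in that segment. All names, the segment count and the test signal are hypothetical.

```python
import cmath
import math

def dft_bin(samples, k):
    """Complex value of DFT bin k (the number an FFT would return for that bin)."""
    n = len(samples)
    return sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples))

def fit_fundamental(samples, cycles):
    """Pure tone equal to: FFT, zero all bins except the fundamental, inverse FFT."""
    n = len(samples)
    x_k = dft_bin(samples, cycles)
    amp, phase = 2 * abs(x_k) / n, cmath.phase(x_k)
    return [amp * math.cos(2 * math.pi * cycles * i / n + phase) for i in range(n)]

def segment_index(x, n_segments, full_scale):
    """Map a sample in [-full_scale, +full_scale) to a segment number."""
    idx = int((x + full_scale) / (2 * full_scale) * n_segments)
    return min(max(idx, 0), n_segments - 1)

def build_error_lut(samples, ideal, n_segments, full_scale):
    """Mean residual (sample - ideal) per segment; empty segments get 0."""
    sums, counts = [0.0] * n_segments, [0] * n_segments
    for x, ref in zip(samples, ideal):
        seg = segment_index(x, n_segments, full_scale)
        sums[seg] += x - ref
        counts[seg] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]

def harmonic_amplitude(samples, k):
    """Amplitude of the tone sitting in bin k."""
    return 2 * abs(dft_bin(samples, k)) / len(samples)

# Synthetic record: a sine with a small cubic non-linearity (assumed values).
N, CYCLES, SEGMENTS, FULL_SCALE = 4096, 7, 256, 1.0
pure = [0.9 * math.sin(2 * math.pi * CYCLES * i / N) for i in range(N)]
measured = [s + 0.02 * s ** 3 for s in pure]

ideal = fit_fundamental(measured, CYCLES)                      # "sine-wave regression"
lut = build_error_lut(measured, ideal, SEGMENTS, FULL_SCALE)   # errors per segment
corrected = [x - lut[segment_index(x, SEGMENTS, FULL_SCALE)] for x in measured]

h3_before = harmonic_amplitude(measured, 3 * CYCLES)
h3_after = harmonic_amplitude(corrected, 3 * CYCLES)
print(f"3rd harmonic before: {20 * math.log10(h3_before / 0.9):.1f} dB")
print(f"3rd harmonic after:  {20 * math.log10(h3_after / 0.9):.1f} dB")
```

Note that the fitted tone absorbs the small gain change caused by the cubic term, so the LUT removes harmonic distortion but not a gain error.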

After calibration, each ADC sample is mapped to its segment, and the associated error value from the LUT is simply subtracted.
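At run time the correction can be as small as a shift and a subtraction. A minimal sketch, assuming unsigned integer ADC codes and a power-of-two number of segments; `correct_sample`, the 12-bit width and the LUT values are made up for illustration:

```python
def correct_sample(code, lut, adc_bits):
    """Subtract the stored error of the segment this ADC code falls into."""
    seg_bits = (len(lut) - 1).bit_length()   # LUT length must be a power of two
    seg = code >> (adc_bits - seg_bits)      # top bits of the code select the segment
    return code - lut[seg]

# Toy 4-segment LUT for a 12-bit code range (illustrative values only).
toy_lut = [2, -1, 1, -2]
print(correct_sample(3000, toy_lut, adc_bits=12))
```

With a 10-bit segment table the same function would take the top 10 bits of each code as the LUT index.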

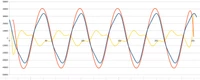

The pictures help to illustrate each step of the process.

Proof of the concept

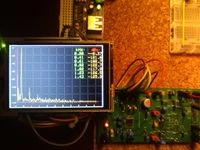

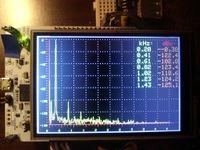

The proof of concept was done on a Nucleo STM32G474RE board. It has a 12-bit ADC capable of oversampling and of running in differential mode. Both features were activated: with OVS = 64, the 2.8 Msps sampling rate drops to 43.75 ksps after oversampling. The FFT is a 4096-point split-radix, DIT, with DIF for the reverse part.
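The numbers above fix the frequency resolution of the analysis. A quick arithmetic sketch using only the figures quoted in the text (variable names are illustrative):

```python
adc_rate_hz = 2.8e6      # raw ADC sampling rate
oversampling = 64        # OVS ratio
fft_size = 4096          # FFT length

output_rate_hz = adc_rate_hz / oversampling   # 43750.0 samples per second
bin_width_hz = output_rate_hz / fft_size      # about 10.68 Hz per FFT bin
record_s = fft_size / output_rate_hz          # about 93.6 ms per record

print(output_rate_hz, round(bin_width_hz, 2), round(record_s * 1e3, 1))
```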

The segment table is 10-bit. All software, including the calibration subroutine and the spectrum analyzer (SA) part shown on the attached LCD, ran on the same G474RE.

An improvement of over 20 dB in the 3rd harmonic was demonstrated. That is more than a factor of ten, bringing INL from the initial typical ~2 LSB down to less than 0.2 LSB.

Simulation of the method itself in pure software shows that its efficiency depends on the distortion level: the improvement starts at 70 dB for highly distorted signals (THD -20 dB), then decreases proportionally for ultra-low-distortion signals, with a lower limit of about -150 dB using float math. Double precision was not evaluated.

The sine wave for calibration was generated using a very high-Q (Q = 2000) selective filter; its purity was confirmed down to -120 dB by another SA. Tests conducted at two frequencies, 200 Hz and 2 kHz, show similar results.

Stability was verified: the calibrated board (with the LUT stored in flash memory) showed the same low-distortion spectrum when powered on the next day.

Limitations of the demo project.

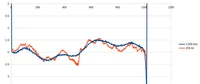

Overall, the hardware implementation is less effective than the software model (THD level -102 dB vs < -122 dB). That may be explained by the complexity of the INL curve of a SAR ADC. First of all, the memory limitation doesn’t allow an FFT larger than 4k, and the high noise level of the internal ADC forced me to activate OVS in order to get the noise floor in the charts below -110 dB.

The two INL curves for the 2 kHz and 200 Hz test signals show that OVS plays a bad role, “smoothing” all edges at the higher frequency. I’m sure the method can demonstrate better results with an SD (sigma-delta) ADC, where the INL curve looks different, without sharp edges.