Minimizing Current Consumption in a High-Voltage Divider Circuit

Hello everyone,

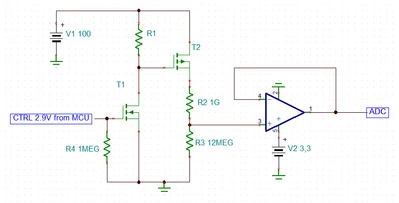

I'm working on a circuit for monitoring the bias voltage of a GM tube, which requires a high voltage of 100V. I am currently using an OA1NP22C op-amp configured as a unity-gain buffer to read the divided voltage. To minimize current consumption, I'm considering a voltage divider built from 1GΩ and 12MΩ resistors. However, this configuration still consumes approximately 9.8µA, which is higher than I would like for my low-power application.
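
As a sanity check on these numbers, here is a minimal sketch of the ideal divider arithmetic. It assumes exactly 100V at the top of the divider and ignores op-amp input bias current and board leakage; the function name `divider` is only for illustration. Under those assumptions the resistors alone would draw roughly 99nA, so the 9.8µA figure may include other contributions or reflect different component values.

```python
def divider(v_in, r_top, r_bottom):
    """Return (current in A, tap voltage in V) for an ideal, unloaded divider."""
    current = v_in / (r_top + r_bottom)   # Ohm's law across the full string
    v_tap = current * r_bottom            # voltage seen by the buffer input
    return current, v_tap


# Values from the post: 100V bias, R1 = 1GΩ, R2 = 12MΩ
i_div, v_tap = divider(100.0, 1e9, 12e6)
print(f"divider current: {i_div * 1e9:.1f} nA")   # 98.8 nA
print(f"tap voltage:     {v_tap:.3f} V")          # 1.186 V
```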

Here is a simplified version of my circuit:

    HV bias (100V)
        |
     R1 (1GΩ)
        |
        +----> OA1NP22C op-amp (unity-gain buffer) ----> MCU
        |
     R2 (12MΩ)
        |
       GND

My questions are:

- How can I further reduce the current consumption of this voltage divider to the nanoampere range?

- Would switching the voltage divider on and off with a transistor be a viable solution? If so, what configuration and components would you recommend for achieving this?

- Are there alternative methods or components that could help in significantly reducing the power consumption while maintaining accurate voltage monitoring?
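
To frame the transistor-switching question, here is a rough sketch of how the average current might scale if the divider were enabled only briefly for each measurement. The 10ms on-time and 1s measurement period are made-up values, not measured ones, and `average_current` is a hypothetical helper, not part of any library.

```python
def average_current(i_on, t_on, period, i_off=0.0):
    """Time-averaged current of a load that is on for t_on seconds per period."""
    duty = t_on / period
    return i_on * duty + i_off * (1.0 - duty)


# Ideal on-state divider current for 100V across 1GΩ + 12MΩ
i_on = 100.0 / (1e9 + 12e6)

# Assumed timing: divider enabled 10 ms out of every 1 s
i_avg = average_current(i_on, t_on=0.010, period=1.0)
print(f"average divider current: {i_avg * 1e9:.2f} nA")
```

This ignores the switch's off-state leakage (the `i_off` argument), the settling time of such a high-impedance node, and any current needed to drive the transistor, all of which could matter at these levels.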

Any insights, suggestions, or examples of similar implementations would be greatly appreciated!

Thank you!

Additional Context:

- Control Signal: 2.9V from a microcontroller.

- Power Efficiency: Critical to keep the overall system power consumption as low as possible.

Feel free to ask for further details if needed.

Zaim