Calibrating the STM32's real-time clock (RTC)

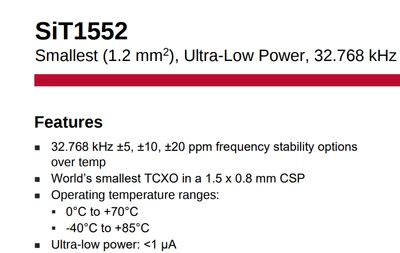

I have an STM32L011F4P6 and am using its RTC. The MCU runs at 3.3 V and 16 MHz from the internal HSI RC oscillator. The RTC is driven by a +/-20 ppm 32.768 kHz crystal. Everything is at room temperature (23-25 C). After calibration, I am still losing several seconds per day! What's wrong?

Here's more information:

I have an auto-calibration routine that outputs the RTC's 1 Hz signal on PA2 and captures its rising edges with TIM2 channel 3, clocked at 16 MHz. I capture the start time (1st edge), add 65536 to a 32-bit variable in the overflow interrupt, and capture the final (33rd) edge to get the total number of 16 MHz clocks in 32 seconds according to the RTC. At the same time, a GPS module's 1 Hz output is fed into PA10, and TIM21 channel 1 is set up identically to the TIM2 capture to get the total number of 16 MHz clocks in 32 seconds according to the GPS.
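The bookkeeping described above can be sketched as a small host-testable function. This is a minimal illustration, not the firmware's actual code: `elapsed_ticks` and its parameters are hypothetical names, and it takes an overflow count rather than a running sum of 65536, which is equivalent.

```c
#include <stdint.h>

/* Hypothetical helper (not from the original firmware): total timer ticks
 * between two captures of a 16-bit free-running counter.
 *   first_capture / last_capture: capture register values at the 1st and
 *                                 33rd edges
 *   overflows:                    update (overflow) events seen in between
 * Unsigned arithmetic wraps modulo 2^32, so the subtraction is safe even
 * when last_capture < first_capture. */
uint32_t elapsed_ticks(uint16_t first_capture,
                       uint16_t last_capture,
                       uint32_t overflows)
{
    return (overflows << 16) + (uint32_t)last_capture - (uint32_t)first_capture;
}
```

For a perfect 32 s window at 16 MHz the result should be 512,000,000 ticks. One thing worth checking in any scheme like this is the case where an overflow and a capture land close together: a single miscounted overflow is 65536 ticks, or 128 PPM over 32 seconds.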

I take the difference between the two numbers and express it not in PPM but in calibration units (one unit is 1/2^20, about 0.954 PPM).

I get a reasonable value of 17 (about 16 PPM), and I adjust the RTC clock accordingly.

I don't recall if it is +17 or -17 that it read, but the magnitude of the value seems reasonable.
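As a sanity check on scale, the drift that a 17-unit correction represents can be worked out directly. This is plain arithmetic on the numbers above, not a measurement:

$$
17 \times \frac{10^{6}}{2^{20}}\ \text{PPM} \approx 16.2\ \text{PPM},
\qquad
16.2 \times 10^{-6} \times 86400\ \tfrac{\text{s}}{\text{day}} \approx 1.4\ \tfrac{\text{s}}{\text{day}}
$$

By the same arithmetic, a loss of 3 seconds per day corresponds to an error of about 35 PPM.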

This value is stored in EEPROM and loaded into the RTC on power up as well.

    // Difference between the RTC and GPS counts, as a fraction of the GPS (reference) count
    f_TempFloat = (float)(i32_RTCclockTotalCount - i32_GPSclockTotalCount);
    f_TempFloat /= (float)i32_GPSclockTotalCount;
    // Convert to calibration units (1 unit = 1/2^20, about 0.954 PPM)
    f_TempFloat *= 1048576;

    // Round to the nearest integer
    if(f_TempFloat > 0)
    {
        i16_FactoryCalibrationValue = (int16_t)(f_TempFloat + 0.5f);
    }
    else
    {
        i16_FactoryCalibrationValue = (int16_t)(f_TempFloat - 0.5f);
    }

    // Clamp to the range the calibration register can express
    if(i16_FactoryCalibrationValue > 511)
    {
        i16_FactoryCalibrationValue = 511;
    }
    else if (i16_FactoryCalibrationValue < -511)
    {
        i16_FactoryCalibrationValue = -511;
    }

    // Positive: add 512 pulses (CALP) and mask 512 - value of them
    // Zero or negative: just mask |value| pulses
    if(i16_FactoryCalibrationValue > 0)
    {
        u32_SmoothCalibPlusPulses = RTC_CALR_CALP;
        u32_SmoothCalibMinusPulsesValue = 512 - i16_FactoryCalibrationValue;
    }
    else
    {
        u32_SmoothCalibPlusPulses = 0x00000000u;
        u32_SmoothCalibMinusPulsesValue = 0 - i16_FactoryCalibrationValue;
    }

    //Disable write protection
    RTC->WPR = 0xCA;
    RTC->WPR = 0x53;

    RTC->CALR = (uint32_t)((uint32_t)u32_SmoothCalibPeriod | (uint32_t)u32_SmoothCalibPlusPulses | (uint32_t)u32_SmoothCalibMinusPulsesValue);

    //Enable write protection
    RTC->WPR = 0xFE;
    RTC->WPR = 0x64;
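To make the register encoding above easier to check off-target, here is a minimal sketch of the same CALP/CALM split as a pure function, plus the effective correction it should produce. The names `calr_encode` and `calr_effective_ppm` are hypothetical. The sketch assumes the default 32-second calibration window (CALW8 and CALW16 clear) and uses the smooth-calibration formula as I understand it from the reference manual: F_CAL = F_RTCCLK x [1 + (CALP x 512 - CALM) / (2^20 + CALM - CALP x 512)].

```c
#include <stdint.h>

#define CALR_CALP_BIT  (1u << 15)  /* same bit position as RTC_CALR_CALP */
#define CALR_CALM_MASK 0x1FFu      /* CALM occupies bits 8:0 */

/* Hypothetical helper: turn a signed number of calibration units
 * (positive = speed the RTC up) into CALP/CALM bits, using the same
 * clamping and branch structure as the snippet above. */
uint32_t calr_encode(int32_t units)
{
    if (units > 511)  units = 511;
    if (units < -511) units = -511;

    if (units > 0) {
        /* Add 512 pulses, then mask (512 - units): net +units pulses */
        return CALR_CALP_BIT | (uint32_t)(512 - units);
    }
    /* Mask |units| pulses: net -|units| pulses */
    return (uint32_t)(-units);
}

/* Effective frequency correction in PPM for a given CALR value,
 * assuming the 32 s window (2^20 RTCCLK pulses). */
double calr_effective_ppm(uint32_t calr)
{
    int32_t calp = (calr & CALR_CALP_BIT) ? 512 : 0;
    int32_t calm = (int32_t)(calr & CALR_CALM_MASK);
    return 1.0e6 * (double)(calp - calm) / (double)(1048576 + calm - calp);
}
```

With this, `calr_encode(17)` gives CALP set and CALM = 495, which works out to roughly +16.2 PPM, and `calr_encode(-17)` gives CALM = 17, roughly -16.2 PPM. Comparing these against the value read back from `RTC->CALR` is one way to confirm the register holds what was intended.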

The clock is intended to operate outside, though so far I'm only testing at room temperature.

I am using the onboard temperature sensor to detect changes in temperature and adjust the calibration accordingly. The GPS calibration routine will always be done at room temperature, immediately after power up, so I calibrate the raw reading at that time to 25C.

During normal operation, the temperature sensor is read every 5 minutes and a temperature calibration factor is calculated. This factor is added to i16_FactoryCalibrationValue and the RTC calibration is updated.
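The temperature factor itself is not shown in this post, so the following is only a sketch of the usual parabolic model for a 32.768 kHz tuning-fork crystal, using the constants defined below. The function name `temp_comp_units`, the round-to-nearest step, and the sign convention (positive means "speed the RTC up", matching the factory value) are assumptions for illustration.

```c
#include <stdint.h>

#define XTAL_K_VALUE 0.034f     /* parabolic coefficient, ppm/C^2 (from the post) */
#define XTAL_T_VALUE 25.0f      /* turnover temperature, C (from the post) */
#define PPM_VALUE    0.953674f  /* ppm per calibration unit (from the post) */

/* Hypothetical helper: calibration units to ADD to the factory value at a
 * given temperature. The crystal runs slow on both sides of the turnover
 * temperature, by K * (T - T0)^2 ppm, so the correction is never negative. */
int16_t temp_comp_units(float temp_c)
{
    float dt = temp_c - XTAL_T_VALUE;
    float slow_ppm = XTAL_K_VALUE * dt * dt;        /* how slow the crystal is */
    return (int16_t)(slow_ppm / PPM_VALUE + 0.5f);  /* round to nearest unit */
}
```

Under these assumptions the correction is 0 units at 25 C, 14 units at 45 C, and 44 units at -10 C. Truncating instead of rounding would give different results close to the turnover temperature.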

    #define XTAL_K_VALUE 0.034f // Parabolic frequency-temperature coefficient (ppm/C^2)
    #define XTAL_T_VALUE 25.0f // Turnover temperature (C)
    #define PPM_VALUE 0.953674f // Adjustment granularity (PPM per calibration unit)

    int16_t Get_Current_Temperature(void)
    {
        uint16_t u16_temp;

        ADC->CCR |= ADC_CCR_TSEN; //Startup time <= 10 us
        ADC1->CHSELR = LL_ADC_CHANNEL_TEMPSENSOR;

        // Busy-wait for the sensor to start: 10 us worth of loop iterations
        uint32_t waitLoopIndex = (10 * (SystemCoreClock / 1000000U));
        while(waitLoopIndex != 0U)
        {
            waitLoopIndex--;
        }

        // Start a conversion and wait for the end-of-conversion flag,
        // with a timeout of 100 us worth of loop iterations
        waitLoopIndex = (100 * (SystemCoreClock / 1000000U));
        ADC1->ISR |= ADC_ISR_EOC; //Clear End of conversion flag
        ADC1->CR |= ADC_CR_ADSTART;
        while( ((ADC1->ISR & ADC_ISR_EOC) != ADC_ISR_EOC) && (waitLoopIndex != 0U))
        {
            waitLoopIndex--;
        }

        ADC->CCR &= ~ADC_CCR_TSEN; //Turn off temp sensor

        if(waitLoopIndex)
        {
            // Conversion finished before the timeout.
            // The EOC flag is cleared by software writing 1 to it or by reading the ADC_DR register.
            // Note: u16_temp is read here but is not used in the calculation below.
            ReadEEPROM(EEPROM_TEMPSENSOR_CAL1_ADDR, &u16_temp);

            // Scale the raw reading to the calibration reference voltage, then
            // interpolate between the two factory calibration points
            return (((int32_t)((ADC1->DR * ((uint32_t)(f_voltageRef * 1000.0f))) / TEMPSENSOR_CAL_VREFANALOG)
                     - (int32_t)*TEMPSENSOR_CAL1_ADDR)
                    * (int32_t)(TEMPSENSOR_CAL2_TEMP - TEMPSENSOR_CAL1_TEMP)
                    / (int32_t)((int32_t)*TEMPSENSOR_CAL2_ADDR - (int32_t)*TEMPSENSOR_CAL1_ADDR)
                    + TEMPSENSOR_CAL1_TEMP);
        }
        else
        {
            return -100; //Timed out: ignore this value
        }
    }
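The conversion in the return statement above is a two-point linear interpolation between the factory calibration readings. A host-testable sketch of the same arithmetic is below. The function name and parameters are hypothetical, and the calibration conditions (30 C and 130 C, measured at VDDA = 3.0 V) are my assumption about this part and should be checked against the datasheet.

```c
#include <stdint.h>

#define TS_CAL1_TEMP_C  30    /* assumed temperature of calibration point 1 */
#define TS_CAL2_TEMP_C  130   /* assumed temperature of calibration point 2 */
#define TS_CAL_VDDA_MV  3000  /* assumed VDDA during factory calibration (mV) */

/* Hypothetical helper: convert a raw temperature-sensor ADC reading to C.
 *   raw:     ADC data register value
 *   vdda_mv: actual analog supply in millivolts (e.g. 3300)
 *   cal1:    factory reading at TS_CAL1_TEMP_C
 *   cal2:    factory reading at TS_CAL2_TEMP_C */
int32_t ts_raw_to_celsius(uint32_t raw, uint32_t vdda_mv,
                          uint16_t cal1, uint16_t cal2)
{
    /* Rescale the reading to what it would have been at the calibration VDDA */
    int32_t scaled = (int32_t)((raw * vdda_mv) / TS_CAL_VDDA_MV);

    /* Linear interpolation between the two calibration points
     * (integer division, so the result has 1 C resolution) */
    return (scaled - (int32_t)cal1) * (TS_CAL2_TEMP_C - TS_CAL1_TEMP_C)
           / ((int32_t)cal2 - (int32_t)cal1) + TS_CAL1_TEMP_C;
}
```

With made-up calibration readings of 670 and 860, a rescaled reading of 670 maps to 30 C, 860 maps to 130 C, and 765 maps to 80 C. Running the on-target readings through a function like this on a PC is a simple way to confirm the temperature path independently of the RTC.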

What is causing me to lose time?

I have already checked and confirmed that the temp sensor reads within 19-29C (24+/-5 C rounds to an adjustment factor of 0).

I have already checked that the adjustment is in the correct direction by using a frequency generator in place of the GPS. A slower external clock results in a slower calibrated RTC and vice versa.

Is it the frequent writes to the calibration register that are causing issues?